I started using Decopy AI Humanizer to make AI-written content sound more natural, but I’m not sure if it’s actually improving readability or helping with AI detection. Some of the output still feels awkward, and I don’t want to waste more time or money if it’s not worth it. I’m looking for honest Decopy AI Humanizer reviews, real user feedback, and advice on whether there’s a better alternative.

Decopy AI Humanizer

I spent some time with Decopy AI Humanizer, mostly because the free tier looked almost absurdly generous at first glance. You get 500 free runs, and each request takes up to 50,000 characters. On paper, that is a lot more than most tools in this space offer. It also includes eight tone options, nine purpose presets, and a sentence-by-sentence rewrite button, which helped when one line came out awkward and I wanted to reroll only that part instead of the whole block.

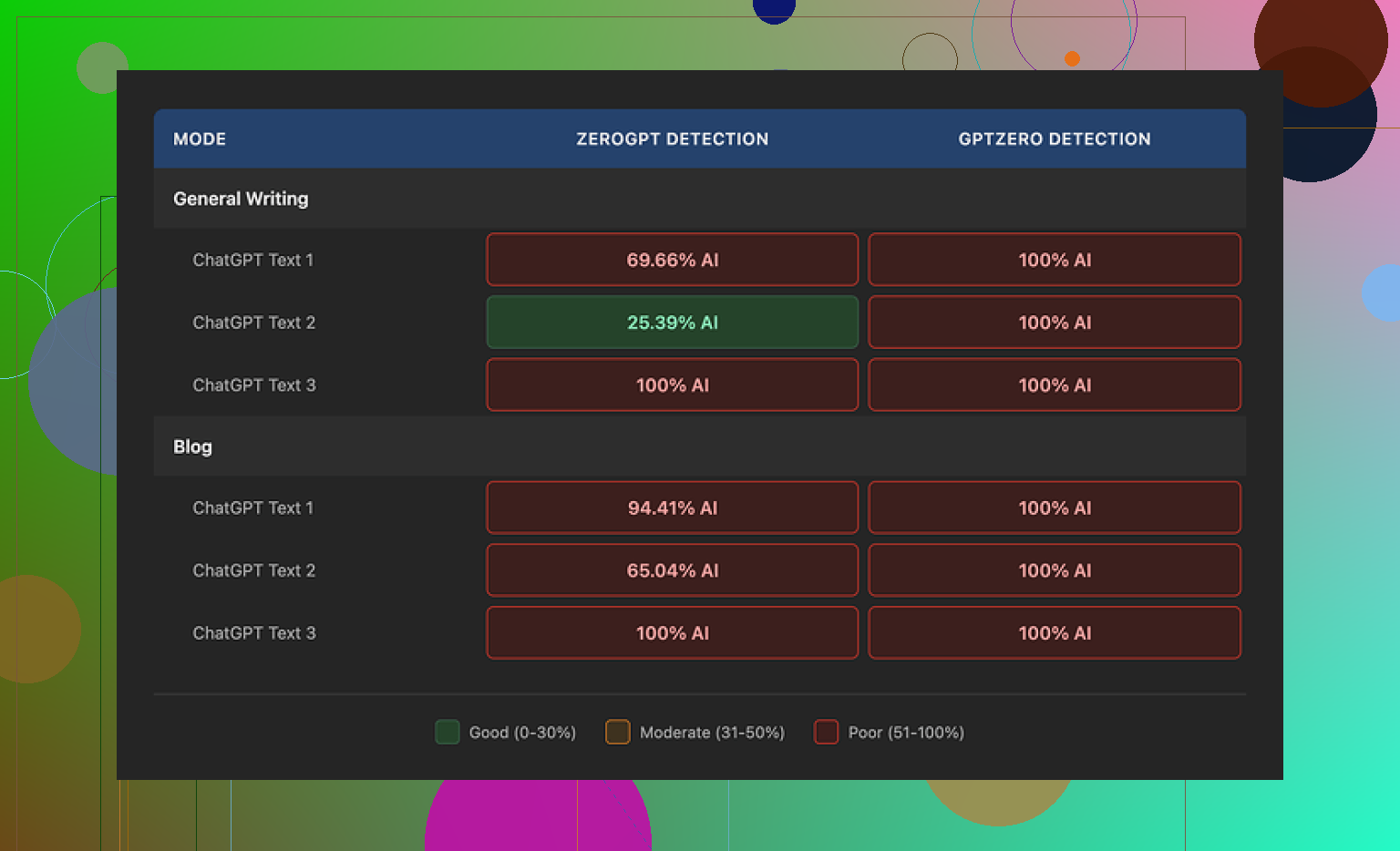

The problem showed up once I ran the outputs through detectors. The feature list looked good. The results did not. In my tests, GPTZero flagged every output as 100% AI in both General Writing and Blog mode. ZeroGPT bounced around more, somewhere between roughly 25% and 100%, depending on the passage. So if your main goal is getting past AI detection, this one looked weak right away.

One area where Decopy did better than I expected was grammar. I did not see it breaking sentences or introducing weird errors the way tools like UnAIMyText and HumanizeAI.io often do. The text stayed clean. For raw readability, I would put Blog mode around 7/10, and General Writing a bit higher at 7.5/10.

Still, I ran into the same issue over and over. It simplified too much. Blog mode in particular felt like it was written for a small kid, not for a normal reader. General Writing mode was less childish, though it still dropped in phrases like 'digital stuff' and 'totally changing tech,' which made the output feel cheap. At least it usually kept the length close to the source, so it did not chop everything down into half-size summaries.

I also checked the privacy side because these tools tend to stay vague there. Decopy’s policy gives a clear retention window of three months and says it follows GDPR and CCPA rules. What I did not find was a clear explanation of what happens to the text you paste in for rewriting. For me, that missing detail matters more than the compliance labels.

After running side-by-side tests, Clever AI Humanizer turned in stronger results for humanization, and I did not have to pay for it.

I had a similar result. Decopy cleaned up grammar, but it did not fix flow in a consistent way. The text read smoother line to line, yet the full paragraph still felt off. Word choice was the main issue for me. It swaps in plain phrases where you need specific ones, so your writing loses tone fast.

I slightly disagree with @mikeappsreviewer on one point. I did not find Blog mode unusable. For simple posts, it was okay. For anything with nuance, it flattened the message too much.

What helped me was treating it like a first pass, not a final draft. I got better output when I:

- fed it shorter sections, around 150 to 250 words
- used the sentence reroll on only the weird lines
- edited the intro and outro myself
- put back domain terms it stripped out
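If you want to automate the first step, here is a minimal sketch of how I would cut a draft into sections before pasting them in. It has nothing to do with Decopy itself. The function name `split_into_sections` and the `max_words` parameter are made up for illustration, and the sentence splitting is deliberately naive (it breaks after `.`, `!`, or `?` followed by whitespace), so abbreviations like "e.g." will trip it up.

```python
import re


def split_into_sections(text, max_words=250):
    """Group sentences into sections of at most max_words words.

    Hypothetical helper for illustration only. A single sentence
    longer than max_words is kept whole in its own section.
    """
    # Naive sentence split: break after ., !, or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

    sections = []
    current = []
    count = 0
    for sentence in sentences:
        n = len(sentence.split())
        # Close the current section if this sentence would push it past the cap.
        if current and count + n > max_words:
            sections.append(" ".join(current))
            current = []
            count = 0
        current.append(sentence)
        count += n

    # Keep whatever is left over as the final section.
    if current:
        sections.append(" ".join(current))
    return sections


if __name__ == "__main__":
    draft = " ".join(["This is a short filler sentence for testing."] * 80)
    for i, section in enumerate(split_into_sections(draft), start=1):
        print(i, len(section.split()), "words")
```

The cap only enforces the upper end of the range. The last section can come out shorter than 150 words, so you may want to merge it into the previous one by hand.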

For AI detection, my results were mixed and kinda messy. One detector scored low, another flagged the same text hard. So I would not trust Decopy for detection-proofing. If your goal is readability, it helps some. If your goal is passing detectors, nah. You still need manual edits, typo-level variation, and more human phrasing or it feels too cleaned up and samey.

I’m a bit more skeptical than @mikeappsreviewer and @himmelsjager on the readability part. Yeah, Decopy can make text look cleaner at first glance, but “cleaner” is not always “more human.” A lot of its rewrites feel sanded down in a way real people usually don’t write. Humans leave texture. Decopy often removes it.

What stood out to me was rhythm. The sentences end up being too evenly paced, too safe, too balanced. That’s exactly the kind of thing that still triggers the “this was processed” vibe even if the grammar is fine. So if you’re reading it and thinking “why does this still feel awkward?”, that’s probably it. It’s not just wording. It’s cadence.
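A rough way to put a number on that evenness is to look at how much sentence length varies. The sketch below is my own assumption about how to measure it, not something Decopy or any detector documents. The name `sentence_length_stats` is hypothetical, the sentence split is naive, and I am not claiming any threshold that separates "human" from "processed" text. It is only useful for comparing a before and after version of the same passage.

```python
import re
import statistics


def sentence_length_stats(text):
    """Return sentence lengths in words, plus their mean and spread.

    Hypothetical helper for illustration only. A low standard deviation
    means the sentences are all about the same length.
    """
    # Naive sentence split: break after ., !, or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]

    if not lengths:
        return {"lengths": [], "mean": 0.0, "stdev": 0.0}

    return {
        "lengths": lengths,
        "mean": statistics.mean(lengths),
        # Population standard deviation; it is 0.0 for a single sentence.
        "stdev": statistics.pstdev(lengths),
    }


if __name__ == "__main__":
    even = "The cat sat down. The dog ran off. The bird flew up."
    varied = "No. The long sentence here keeps going for quite a while longer. Fine."
    print(sentence_length_stats(even))
    print(sentence_length_stats(varied))
```

If the rewritten version shows a much smaller spread than your original draft, that matches the "too evenly paced" feeling. It does not capture word choice or structure, so treat it as one signal among several.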

I also wouldn’t put much faith in detector scores either way. Those tools contradict each other constantly, so using Decopy just to chase a lower percentage is kinda a trap. You can get a lower score and still have text that sounds fake. That’s the bigger problem imo.

Where I mildly disagree with both of them is this: I don’t think Decopy is useless for flow. It can help when your source text is robotic and repetitive. But it works more like a surface polisher than a real editor. If the original piece has weak structure, vague claims, or generic transitions, Decopy won’t magically fix that stuff.

My take: use it only after you’ve already rewritten the piece in your own voice a little. If you use it on raw AI copy, it usually just gives you “AI copy wearing a hoodie.” Less stiff, still suspicious lol.