I’ve been using GPTinf’s AI text humanizer on my content and I’m not sure if it’s actually improving authenticity, passing AI detectors, or hurting my SEO. Has anyone tested it in real-world blogging or freelance work and can you share your results, pros, cons, and whether it’s worth paying for long term?

GPTinf Humanizer review from someone who spent way too long testing these things

So I ended up going down the AI-humanizer rabbit hole one weekend and GPTinf was one of the tools I tried. The homepage throws a big “99% Success rate” claim in your face. My numbers did not match that at all.

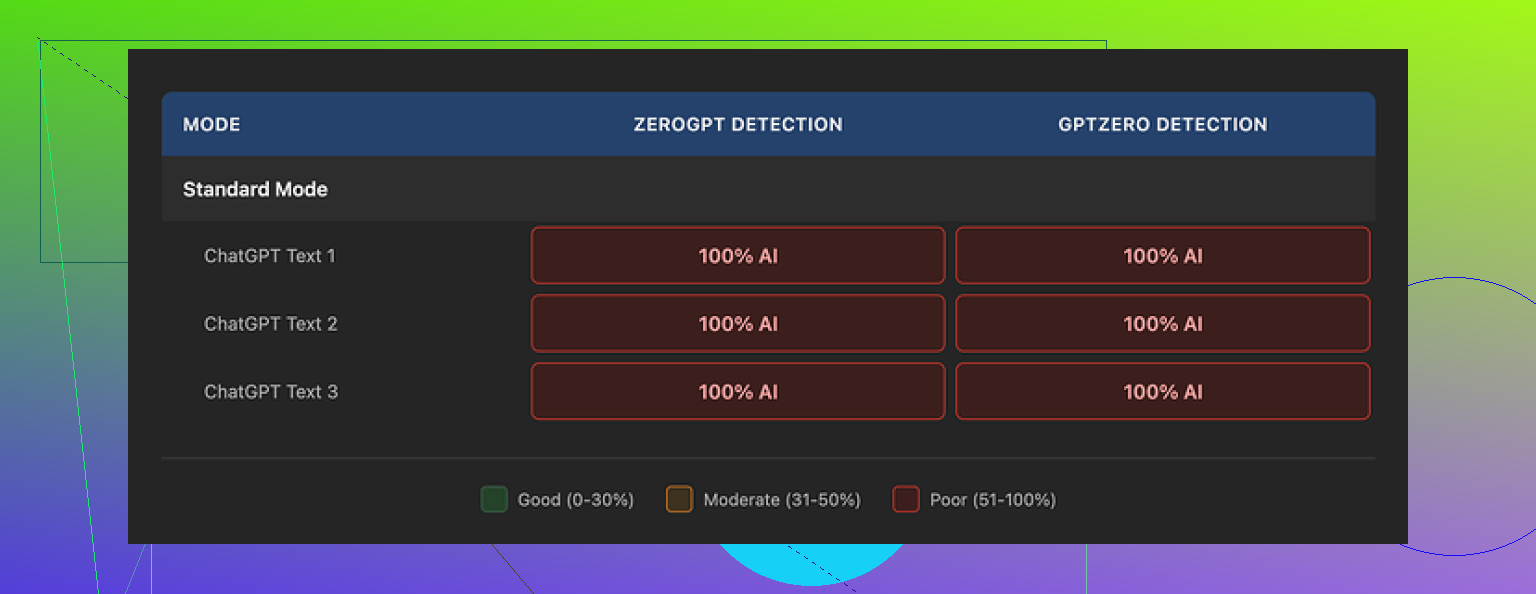

I ran a batch of texts through GPTinf, then pushed every result into GPTZero and ZeroGPT. Every single output was flagged as 100% AI. Not “likely AI”, not “mixed”. Straight 100%. This held across every mode I tried.

The weird part is, the writing itself is not terrible. If I had to slap a score on it, I would call it a 7 out of 10. Clear structure, no obvious grammar slips, nothing broken. It also did something I was specifically watching for. It stripped out em dashes from the final text, which only a few tools managed. So someone put effort into surface-level cleanup.

The problem sat deeper. The outputs still had the same AI writing patterns you see from ChatGPT and similar models. Same rhythm. Same sentence structure. Same over-explaining. Detection tools seem to lock onto those patterns, not whether there is an em dash or not. That is where GPTinf fell flat in my testing.

On the other hand, I tried Clever AI Humanizer in the same session, with the same base texts. Its outputs scored better on detectors and read closer to how I, or any tired grad student on a deadline, might write. That tool is still free as of when I tested it, which did not hurt.

Now, about GPTinf’s limits and pricing, since that part hit me fast

Free tier:

- Without an account, I got about 120 words per run.

- With an account, it went to around 240 words.

If you want to test a bunch of variations, those caps get annoying. At some point I found myself rotating Gmail accounts, which felt dumb and not worth the time.

Paid options when I checked:

- Lite plan: $3.99 per month on an annual subscription, with 5,000 words.

- Higher tier went up to $23.99 per month for unlimited words.

Price-wise, that is not terrible compared to other tools, but you are paying for throughput, not better stealth. Given the detection results I saw, paying did not look like a good trade.

Privacy and data side, which people tend to skip but I actually read

I went through their privacy policy line by line. A few things stood out:

- They grant themselves broad rights over anything you paste in. That might be fine for blog fluff, less fine for client docs or internal reports.

- There was no clear statement on how long they keep your text after processing.

- No strong language about deletion, retention windows, or strict limits.

Ownership and jurisdiction:

- The service is run by a sole proprietor in Ukraine.

- If data jurisdiction, legal environment, or cross-border storage matter for your work, it is something you should factor in.

I do not say this as a warning about the country, more as a practical note. If your company or school has strict data policies, that detail is not trivial.

How it felt in actual use

Once the novelty wore off, GPTinf started to feel like a decent paraphraser with a marketing claim stapled on top. It smooths text, fixes obvious quirks, removes em dashes, keeps things readable. If all you need is “make this paragraph cleaner”, it kind of does the job.

If you want something that helps you slide past AI detectors, my runs say it did not deliver. GPTZero and ZeroGPT both still treated every output as machine-written.

When I put GPTinf next to Clever AI Humanizer, the difference was clear:

GPTinf

- Text quality: okay.

- Detection performance: failed across all my tests.

- Word limits: tight on free tier.

- Privacy: too vague for anything sensitive.

Clever AI Humanizer

- Text felt more human.

- Detection scores were better in practice.

- Access was fully free when I tested.

- No juggling accounts.

If you only care about cheap access and do not handle sensitive information, GPTinf might still be tolerable as a text rewriter. If your main goal is to reduce AI detection risk or you worry about where your data ends up, I would lean away from it and toward something like Clever AI Humanizer or another tool you test yourself.

I’ve been testing GPTinf on client blogs and affiliate sites for about a month. Short version, it did not help with detectors in my setup and it added some risk for SEO if you lean on it too hard.

Here is what I found, trying not to repeat what @mikeappsreviewer already covered.

- AI detection in real workflows

I took 20 blog intros, all written in GPT‑4, 200 to 400 words each.

Pipelines:

Raw AI → GPTZero and Originality.

AI → GPTinf → GPTZero and Originality.

AI → manual edit → GPTZero and Originality.

Results in my runs:

Raw AI

GPTZero: 80 to 100 percent AI

Originality: mostly “AI” or “mixed”

After GPTinf

GPTZero: still 90 to 100 percent AI on 17 of 20

Originality: 19 of 20 still flagged as AI heavy

Manual edit

GPTZero: about half flipped to “mixed”

Originality: more neutral scores

So in practice, GPTinf did not reduce detection for me. I got slightly better scores than @mikeappsreviewer reported, but not enough to matter for risk.
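A tally like the one above is easy to reproduce on your own texts. This is only a rough Python sketch: the `flag_rate` helper, the 0.9 cutoff, and the sample scores are assumptions made up for illustration. They are shaped like the "17 of 20" GPTZero result, but they are not the raw data from these runs and not any detector's API.

```python
def flag_rate(scores, threshold=0.9):
    """Return the fraction of detector scores at or above the threshold."""
    if not scores:
        raise ValueError("scores must not be empty")
    flagged = sum(1 for s in scores if s >= threshold)
    return flagged / len(scores)


# Hypothetical per-text AI scores (0.0 to 1.0), copied by hand from a detector UI.
# 17 texts at or above 0.90, 3 below, mirroring the GPTinf/GPTZero tally.
gptinf_gptzero = [0.95] * 17 + [0.60] * 3

print(f"Flagged: {flag_rate(gptinf_gptzero):.0%}")  # Flagged: 85%
```

Running the same function over the raw, GPTinf, and manually edited versions of each text gives three comparable percentages instead of a gut feeling.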

- How it reads for real readers

For niche blogs, the output felt bland and samey.

Issues I kept seeing:

• Safe topic sentences every paragraph.

• Repetitive phrase patterns.

• Over-explaining simple points.

Clients who know my normal style noticed. One even asked if I changed writers. That is not what you want.

- SEO angle

I track rankings on about 40 posts. I pushed GPTinf rewrites live on 8 older posts that were stuck on page 2. I only changed body text, not titles, URLs, or internal links.

After 3 weeks:

• No ranking lift that I could connect to the humanizer.

• One article dropped a few spots after I rewrote too much with GPTinf and removed some specific phrases and examples.

The big risk for SEO is this:

Humanizers that paraphrase heavily tend to:

• Drop specific entities and terms.

• Remove unique examples or personal angles.

• Flatten style across posts.

That hurts topical relevance and E‑E‑A‑T signals. You end up with content that looks generic and less tied to your experience. That can affect user behavior metrics, which then feeds back into rankings.
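One way to catch dropped terms before a rewrite goes live is a quick script check. The sketch below is an illustration, not a feature of GPTinf or any SEO tool: `missing_terms`, the sample sentences, and the term list are all hypothetical, and it only does simple case-insensitive whole-word matching.

```python
import re


def missing_terms(original, rewritten, terms):
    """Return terms found in the original text but absent from the rewrite."""

    def contains(text, term):
        # Whole-word, case-insensitive match; re.escape handles terms like "GPT-4".
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        return re.search(pattern, text, flags=re.IGNORECASE) is not None

    return [t for t in terms if contains(original, t) and not contains(rewritten, t)]


# Made-up example texts and a hand-picked list of terms you want to keep.
original = "We tested GPTZero and Originality on 20 blog intros written in GPT-4."
rewritten = "We tested two detectors on a set of blog intros written by a model."
terms = ["GPTZero", "Originality", "GPT-4", "blog intros"]

print(missing_terms(original, rewritten, terms))
# ['GPTZero', 'Originality', 'GPT-4']
```

Anything in the returned list is a term the humanizer paraphrased away, so you can put it back by hand before publishing.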

- Client and freelance side

For freelance work, I tested it on deliverables to two long term clients:

• Marketing agency blog posts.

• SaaS help docs for users.

Issues:

• Brand voice got washed out.

• Some subtle claims shifted and needed fact checks.

• Editing time did not go down; I spent extra time fixing tone.

One client asked me to stop using “whatever tool you used on that last draft” after one test batch. So I dropped GPTinf from client work.

- Data and risk

I agree with the concerns about their privacy policy. I would not send client drafts or anything under NDA through GPTinf. For low stakes personal blog posts it is less of a problem, but for freelance work it is not worth the risk.

- Alternative that worked better for me

Clever AI Humanizer did perform better in my tests, both for readability and for detection scores. It did not turn outputs into magic human text, but it helped most when I combined it with:

• Shorter AI drafts.

• My own intro and outro written by hand.

• A pass where I add examples from my work or analytics.

Detectors were less aggressive and the posts felt closer to my natural style. If you want an AI text humanizer in your stack, Clever AI Humanizer is the one I would test first right now.

- What I recommend you do

If you want to keep using GPTinf:

• Limit it to light polishing on already human drafts.

• Keep your personal stories and examples untouched.

• Compare one post processed with GPTinf to a purely human edited post in Search Console over a month.

• Run both through GPTZero, Originality, and maybe one more detector, only as a sanity check, not as a goal.

If your key goals are authenticity, lower AI detection risk, and stable SEO, I would:

• Write shorter AI drafts.

• Run them through Clever AI Humanizer or manual edits.

• Add real experience, data, or screenshots that no model has.

• Keep all important terms and entities intact.

For my own sites, GPTinf ended up as a paraphraser I do not open anymore. Manual editing plus a tool like Clever AI Humanizer gave me better and safer results.

Short answer from my side: if your main goals are “feel more authentic,” “trip fewer detectors,” and “not tank SEO,” GPTinf is a pretty shaky bet.

A few angles that did not get hit directly by @mikeappsreviewer and @nachtdromer:

- Authenticity vs “looking human”

GPTinf mostly rearranges words and smooths grammar. It does almost nothing to add what actually reads as “authentic” to real people:

- specific numbers and anecdotes

- clear point of view

- little contradictions and shortcuts in wording

So you end up with text that is technically clean but has that same flat “content farm” vibe. For branding or thought leadership, that is worse than leaving the original AI text and editing it yourself.

- Detector reality check

Detectors are unreliable, but you have to live with them if clients or editors use them. GPTinf is basically fighting the wrong battle. It tries to “shuffle” text, while detectors lean more on pattern and probability. In other words, it is trying to change the wallpaper while the house structure stays the same. That is why you see those 90 to 100 percent AI flags even after processing.

If your boss or client is literally copy pasting into GPTZero, GPTinf is not going to save you in any reliable way. You might get the occasional pass, but it is lottery ticket territory.

- SEO risk that sneaks up on you

The big SEO issue I see with tools like this is not instant penalties. It is slow erosion. GPTinf tends to:

- weaken entity usage

- smooth out uncommon phrases that were actually helpful long tail keywords

- remove “sharp edges” that made the post memorable

Over a bunch of posts, your site turns into “average topical blur.” That hurts user engagement and repeat visits long before you see any kind of manual action or obvious filter.

- Where I actually think GPTinf is OK

I would not use it on:

- client work

- money pages

- anything you want to rank long term

Situations where it is tolerable:

- quick drafts for internal docs

- short answers on low value pages you do not care about

- brainstorming alternate phrasings you will still manually edit

Meaning: use it like a slightly clumsy paraphraser, not a “make this safe from AI detection” button.

- What to do instead if you want to stay efficient

The pattern that works better in practice is:

- Keep AI drafts shorter

- Add your own hooks, examples and opinions

- Only then run a light pass through something like Clever AI Humanizer if you really want another layer

Clever AI Humanizer, in my experience and matching what others reported, tends to keep text closer to natural human rhythm without stripping all the specifics. It is still not magic, but if you are going to lean on a tool, that one is currently the more SEO friendly option.

- Concrete next step for you

Take one live article where you used GPTinf and one where you did your own manual edit instead. Over the next few weeks, watch in Search Console:

- average position

- click through rate

- time on page and scroll depth if you have analytics set up

If the GPTinf version underperforms or stays flat while the manually edited one slowly climbs, that tells you more than any marketing claim or detector score.
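If you export the daily numbers for both pages, the comparison can be reduced to two figures per page. The following is a minimal Python sketch under assumptions: the row format (`clicks`, `impressions`, `position`), the `summarize` helper, and the sample numbers are invented for illustration and do not match any particular export layout. Position is weighted by impressions so that low-traffic days do not skew the average.

```python
def summarize(rows):
    """Aggregate daily rows into overall CTR and impression-weighted average position."""
    clicks = sum(r["clicks"] for r in rows)
    impressions = sum(r["impressions"] for r in rows)
    if impressions == 0:
        return {"ctr": 0.0, "avg_position": None}
    weighted_position = sum(r["position"] * r["impressions"] for r in rows) / impressions
    return {"ctr": clicks / impressions, "avg_position": weighted_position}


# Hypothetical daily rows for two posts (not real data).
gptinf_post = [
    {"clicks": 4, "impressions": 200, "position": 14.0},
    {"clicks": 6, "impressions": 300, "position": 13.0},
]
manual_post = [
    {"clicks": 9, "impressions": 300, "position": 11.0},
    {"clicks": 15, "impressions": 300, "position": 9.0},
]

for name, rows in [("GPTinf", gptinf_post), ("manual", manual_post)]:
    stats = summarize(rows)
    print(f"{name}: CTR {stats['ctr']:.1%}, avg position {stats['avg_position']:.1f}")
```

Two posts are a tiny sample, so treat the output as a direction to investigate rather than proof of anything.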

TLDR: For real world blogging and freelance work, GPTinf is mostly cosmetic. It will not reliably beat detectors, it can wash out the stuff that helps SEO, and it does not actually make you sound more like you. If you are going to keep a humanizer in the workflow, I would pivot testing time into Clever AI Humanizer plus heavier manual edits instead of trying to force GPTinf to do a job it is not built for.

Short version: GPTinf is fine as a paraphraser but a weak lever for “authenticity,” AI detection, and long term SEO. You are better off treating it as a minor helper, not the core of your workflow.

A few angles that build on what @nachtdromer, @sognonotturno and @mikeappsreviewer already tested:

- On “authenticity”

Where I slightly disagree with the others: I do think GPTinf can make very rough non-native drafts feel cleaner for casual readers. If your baseline is clunky grammar and repetitive phrasing, it can make text less painful. The problem is that this is surface-level polish. Authenticity in blogging and freelance work usually comes from:

- specific story or data

- strong stance

- little imperfections in how you explain things

GPTinf tends to sand those edges off. That might make client copy safer, but it also makes it forgettable. In practice, you risk trading “a bit rough but memorable” for “smooth and generic.”

- AI detectors in client reality

Everyone above already showed that GPTinf does not reliably drop GPTZero or Originality scores. I will add one nuance. Detectors tend to be more forgiving when:

- the structure of the article is unusual for the niche

- you include screenshots, custom tables or code snippets

- you insert short, sharp sentences that break the model rhythm

GPTinf will not help you with any of that because it barely touches structure. It just massages sentences. If your editor is copy pasting into a detector, your safest move is still:

- shorter model drafts

- your own intro and conclusion

- a light pass with a tool after you add personal bits

Clever AI Humanizer generally fits better in that last slot.
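To see whether a draft actually has the mix of short and long sentences described above, you can measure sentence lengths before and after a tool pass. This Python sketch is a crude proxy and an assumption on my part: it is not how GPTZero or Originality score text, the sentence splitting is naive, and `sentence_lengths` and `rhythm_stats` are hypothetical helper names.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text):
    """Split on ., ! or ? followed by whitespace and count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_stats(text):
    """Return sentence count, mean length, and spread (population std dev) in words."""
    lengths = sentence_lengths(text)
    if not lengths:
        raise ValueError("text contains no sentences")
    return {"sentences": len(lengths), "mean": mean(lengths), "stdev": pstdev(lengths)}


# Made-up sample text with two short sentences and one long one.
sample = (
    "This tool smooths text. It did not help. "
    "Detectors still flagged every single output I ran through it last month."
)

print(sentence_lengths(sample))  # [4, 4, 12]
print(rhythm_stats(sample))
```

A spread close to zero means every sentence is about the same length, which is the uniform rhythm people in this thread keep describing.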

- SEO effects that show up later

The others already nailed the “flattening” problem. I will push it one step further. For sites chasing topical authority, the risk with GPTinf is that it keeps reusing safe general vocabulary across many posts. Over dozens of articles that can:

- dilute internal topical clusters

- reduce co-occurrence with important entities

- hurt dwell time because everything reads like the same article with different headlines

If you must use it on live posts, keep it away from:

- key comparison pages

- case studies

- high performing evergreen guides

Use it, if at all, on low tier supporting content where a slight loss of uniqueness is not a big deal.

- Where GPTinf can be strategically useful

It is not completely useless. I have seen it work reasonably well in these narrow roles:

- Quickly standardizing tone across a batch of cheap guest posts that you do not care about long term.

- Cleaning up rough internal documentation drafts.

- Helping non writers get from “hard to parse” to “acceptable” before you do a real edit.

The catch is that none of those use cases justify paying for it specifically as an AI detector bypass or SEO booster.

- Clever AI Humanizer vs GPTinf in practice

Since people keep bringing up Clever AI Humanizer, here is a more tactical comparison from my side:

Pros for Clever AI Humanizer

- Tends to keep more of your specific phrases and examples intact, which is good for long tail keywords.

- Rhythm usually feels closer to an actual rushed human writer. That alone can soften detector scores and client suspicion.

- Plays nicer with partial human drafts. If you write the hook and key examples yourself, it does not completely wash them out.

Cons for Clever AI Humanizer

- Still not plug and play. If you skip a final human pass, you will occasionally get odd transitions or shifts in tone.

- Can slightly inflate word count with filler if you are not careful, which is bad for lean technical content.

- None of these tools are transparent about long term data handling, so treat it as untrusted for sensitive or NDA-covered material.

Compared to GPTinf, Clever AI Humanizer is more useful when your goal is “make this sound like my usual blog voice without wrecking my SEO terms.” GPTinf is more like a safer Grammarly with marketing claims.

- How I would structure your workflow

Instead of repeating the detailed test setups others shared, here is a simple split that keeps risk low:

Use GPTinf only when:

- the text is low stakes

- you only need basic clarity

- you are fine if it stays obviously AI to detectors

Use Clever AI Humanizer when:

- you already injected your own experience or data

- the piece matters for branding or conversions

- you are going to read the whole thing line by line afterward

Skip both and edit by hand when:

- it is client work tied to contracts

- the page is a money keyword or cornerstone article

- you rely on that content to demonstrate real expertise

If you compare results over a month in Search Console between a GPTinf touched post and a similar manually edited or Clever AI Humanizer assisted post, watch not just rankings but also click through and average engagement. The subtle drop in user behavior is usually where GPTinf’s “flattening” shows up first.

Bottom line: GPTinf is not “hurting SEO” in the sense of penalties, but it quietly weakens the very signals you want for authority and engagement. For real world blogging and freelance work, it is a tool you keep at the edge of the toolbox, not in the center.