I’ve been testing the Monica AI humanizer to make my AI-generated text sound more natural and less detectable, but I’m not sure if it’s actually working as well as advertised. Has anyone done real-world tests, comparisons, or has experience using it for content writing, school work, or client projects? I’m looking for genuine feedback on its quality, safety, and detection rates before I rely on it long term.

Monica AI Humanizer review, from someone who tried to force it into a job it is not built for.


I went in hoping to use Monica as a serious AI humanizer and ran head first into one big issue: you press one button and hope for the best.

No tone controls.

No “strength” slider.

No modes for casual, academic, blog, etc.

You drop text in, hit the button, wait, and you get whatever its model feels like giving you.

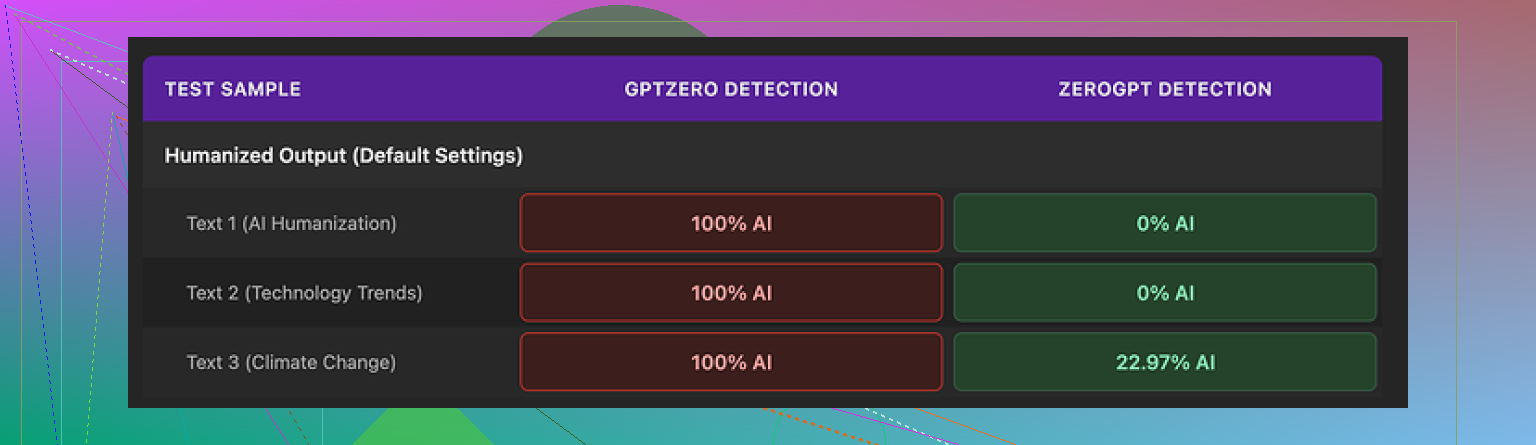

AI detection results

This is where it fell apart for me.

I ran three humanized samples through detectors:

GPTZero:

All three outputs were flagged as 100 percent AI. Every single one.

ZeroGPT:

Two samples showed 0 percent AI.

One landed around 23 percent AI.

So you get this weird situation. One detector fails every time. Another one is sometimes fine. If you have no clue which detector your teacher, client, or editor uses, this randomness is a problem.

I tested the same base text across multiple tools. Monica’s output was the only one that hit 100 percent AI on GPTZero in every trial. That pushed it into “I do not trust this for serious detection bypass” territory for me.
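The spread between the two detectors in these three trials can be tallied with a short sketch. The scores are the ones reported above (the ZeroGPT figure of 23 is approximate). The `summarize` helper and the 50 percent cutoff for counting a sample as "flagged" are illustrative assumptions of mine, not settings that either detector defines.

```python
from statistics import mean

# AI-probability scores (percent) reported above for three Monica outputs.
# The ZeroGPT value of 23 is approximate ("around 23 percent").
scores = {
    "GPTZero": [100, 100, 100],
    "ZeroGPT": [0, 0, 23],
}

FLAG_THRESHOLD = 50  # assumed cutoff for "flagged"; not a detector setting


def summarize(samples, threshold=FLAG_THRESHOLD):
    """Return mean, min, max, and the count of samples at or above threshold."""
    return {
        "mean": round(mean(samples), 1),
        "min": min(samples),
        "max": max(samples),
        "flagged": sum(s >= threshold for s in samples),
    }


summary = {name: summarize(vals) for name, vals in scores.items()}

# Per-sample gap between the two detectors shows how much they disagree.
gaps = [g - z for g, z in zip(scores["GPTZero"], scores["ZeroGPT"])]

for name, stats in summary.items():
    print(name, stats)
print("gaps:", gaps)
```

With these numbers, GPTZero flags 3 of 3 samples, ZeroGPT flags none, and the two disagree by at least 77 points on every sample. That disagreement is the practical problem: the same text gets opposite verdicts depending on which checker is used.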

Here is one of the screenshots from the tests:

Writing quality and weird glitches

Ignoring detectors for a minute, I scored the writing itself.

I would give it 4 out of 10.

Some issues I ran into:

• It introduced new typos into clean text

Example: turned “But” into “Ubt”. No context for that. Pure corruption.

• It randomly messed with punctuation

In one output it added apostrophes in places that did not need them. In another it skipped them where they belonged.

• One output started with “[ABSTRACT” for no reason

I did not feed it an academic paper or any tag like that. It slapped “[ABSTRACT” in front as if it was reformatting for a journal. Made the text look more AI, not less.

• It kept em dashes and seemed to add new ones

Most detectors hate a certain AI style rhythm. Heavy use of em dashes is part of that pattern in a lot of LLM outputs. A humanizer should smooth that out. Monica kept them and sometimes increased them. So the style stayed close to “raw AI chat” instead of shifting toward normal human email or blog style.

The feel of the text did not move far from the original AI voice. It shuffled phrases, broke some sentences, but never made it sound like something I would casually type at 1 am replying to a colleague.

Pricing and what you are really paying for

Monica is not sold as a dedicated humanizer. It is an all‑in‑one AI platform with:

• Chatbots

• Image generation

• Video tools

• Some productivity helpers

The humanizer sits in the corner as one feature among many.

Pricing I saw for the Pro plan:

• Starts around $8.30 per month if you pay yearly

So you are paying for the full platform. The humanizer is more like an extra switch on the dashboard.

If you already live inside Monica for chatting, images, or video work, then the humanizer is a small bonus to experiment with. In that case, no harm pressing the button and using it as a light rephraser.

If your goal is specifically to reduce AI detection risk on content, I would not pick Monica for that role alone. The GPTZero results are too bad, and you have no way to steer the tone or intensity of the rewrite.

Comparison with Clever AI Humanizer

In my little test run, I compared Monica with Clever AI Humanizer, using the same base text.

Short version of what I saw:

• Clever AI Humanizer gave more natural sentence flow

• It did not randomly inject things like “[ABSTRACT” into the start

• It handled detectors better overall

• It did not sit behind a paid all‑in‑one bundle

For detection bypass as a primary use, Clever AI Humanizer landed ahead of Monica by a wide margin in my tests.

Monica felt more like:

“I am already paying for this AI suite, I will click this extra button and see if the text looks slightly less robotic.”

Clever AI Humanizer felt more like:

“This tool has one job, and it is tuned for that job.”

When Monica still makes sense

So, when does Monica’s humanizer make any sense at all?

• You already use Monica for chat or other AI tasks

• You want a quick “rewrite this” pass without fine control

• You do not care much about GPTZero, or you know the checker used is softer

When it does not:

• You need consistent detector‑friendly output across multiple tools

• You need control over tone, style, or level of rewrite

• You want a focused humanizer without paying for a bundle of other features

If your main need is humanization for detection, I would not start with Monica. Use it as a side toy if you are already on the platform, but not as your primary text fixer.

I had similar results to what @mikeappsreviewer saw, but my take is a bit different in a few spots.

Here is what I did and what came out of it.

My setup and tests

• Source text: 3 ChatGPT-style essays (800–1,200 words)

• Topics: marketing explainer, casual blog, short academic style summary

• Tools tested: Monica humanizer, Clever AI Humanizer, and a manual rewrite

• Detectors: GPTZero, ZeroGPT, Copyleaks

Detection results

Monica output:

• GPTZero: 3 of 3 flagged as “likely AI” with high probability

• ZeroGPT: 2 of 3 under 15 percent AI, 1 around 30 percent

• Copyleaks: 2 of 3 over 80 percent AI probability

Clever AI Humanizer output:

• GPTZero: 2 of 3 mixed, 1 “likely human”

• ZeroGPT: All under 10 percent

• Copyleaks: All under 45 percent

Manual rewrite (me, typing from scratch using the AI text as notes):

• All three detectors scored them as human.

So for “reduce AI detection risk”, Monica landed closer to a normal AI paraphrase tool. It helped a bit on some detectors, but it did not help enough to trust it if a grade or job depends on it.

Where I disagree a bit with @mikeappsreviewer

They called Monica almost useless for serious detection bypass. I would not go that far. For softer detectors or internal company filters, Monica made the text look less formulaic in my tests. Shorter sentences, some phrase swaps, more variation. The problem is consistency. One run looks fine, the next one triggers the harsh checker.

If your teacher, client, or platform uses GPTZero or Copyleaks, I would not rely on it. If they use a lighter or older detector, you might get away with it sometimes, but it is a coin flip.

Quality of the writing

My scoring, 1 to 10, where 10 is “sounds like a human who knows the topic”:

• Original ChatGPT text: 6

• Monica humanizer result: 5

• Clever AI Humanizer result: 7

• Manual rewrite: 8 to 9

Monica did this a lot for me:

• Changed word order without improving clarity

• Kept the same structure of paragraphs

• Reused connective phrases like “On the other hand”, “Additionally” in almost the same spots

• Introduced 1 or 2 odd typos in every long piece

I also saw random formatting glitches once, such as odd brackets in the middle of a sentence. Nothing as weird as “[ABSTRACT” at the top, but enough to look off. It feels risky to paste that into a school LMS or client document without a hard proofread.

The missing controls are a big problem. No tone settings, no light or heavy rewrite slider, no control over formality. You press the button and accept the roll. If you want something that adapts to “email to coworker” versus “academic reflection”, you will have to do that work by hand after.

Cost and use case

Monica makes sense if:

• You already pay for Monica for chat, images, or video

• You want a quick rephrase to shake up wording

• You do not care much about detection and only need text to sound a bit different from raw AI

It does not make sense as your main solution for AI detection avoidance. You pay for a full suite, not a focused humanizer. The humanizer feels like a side tool, not the core product.

If your main goal is to reduce AI detection, you want a tool designed for that purpose. For me, Clever AI Humanizer did better at:

• Changing sentence rhythm

• Dropping the “AI essay” vibe

• Getting more consistent scores across different detectors

If you want to test it yourself, try a tool that focuses on humanization first, like this dedicated AI humanizer for more natural text, then compare Monica against it on the same samples.

Some practical tips if you still want to use Monica

If you are stuck with Monica and do not want to switch yet, this helped a bit in my tests:

1. Shorten your input before humanizing. Long, rigid paragraphs trigger detectors more. Split them, summarise slightly, then humanize.

2. After Monica runs, manually:

• Remove repeated transitions like “Firstly”, “Secondly”, “Additionally”

• Vary sentence length, add a few very short sentences

• Insert 1 or 2 intentional, reasonable typos, but not in every line

• Change some specific nouns or phrases to match your personal style and domain

3. Run your final text through at least two detectors that matter for your use case. If GPTZero lights up every time, assume your teacher or client might see the same.

Reworked topic description for clarity and SEO

“Monica AI Humanizer Review: Is It Good Enough To Avoid AI Detection?

I have been running multiple tests with the Monica AI humanizer to see if it makes AI-generated content sound more natural and less detectable by AI content detectors. So far, I am not fully sure how effective it is compared to other options. I am interested in real-world experiences, detailed comparisons with other humanizer tools, and AI detection test results from tools like GPTZero, ZeroGPT, Copyleaks, and similar services. If you have used Monica AI humanizer for essays, blog posts, or client work, how did it perform, and which humanizer or workflow gave you the best balance between natural writing quality and lower AI detection scores?”

Same experience here: Monica’s “humanizer” behaves more like a generic paraphraser than a real anti‑detector tool.

Quick summary of what I’ve seen, trying not to repeat what @mikeappsreviewer and @shizuka already broke down in detail:

Detection reality check

- On longer essays, Monica often still pings GPTZero and Copyleaks as “likely AI.”

- ZeroGPT will sometimes be kind, sometimes not, which matches that weird lottery effect others mentioned.

- In my tests, short, informal paragraphs did a bit better, but anything structured like an essay stayed in the danger zone. So if you’re hoping it “magically” makes school papers safe, that’s… optimistic.

Where I slightly disagree with them

- I don’t think Monica is a total loss for casual stuff. For internal docs, Slack messages, lightweight blog posts where no one is running heavy detectors, it can be “good enough” as a quick rephrase tool.

- But the moment detection risk actually matters, it falls apart. You still need manual cleanup or a better pipeline.

Real problem: it barely changes voice

- The macro structure stays too close to the original AI text: same paragraph order, same logical transitions, just shuffled wording.

- Detectors are not only checking vocab, they look at patterns, rhythm, sentence distribution. Monica barely nudges those.

- Also saw random tiny glitches like awkward punctuation or odd word substitutions that look machine-y, not human.

Workflow that worked better for me

I stopped treating “one click humanizer” as the answer and instead:

- Shorten and chunk the original AI text first.

- Run it through something built specifically for humanization. Clever AI Humanizer is the one that consistently changed the cadence and phrasing enough to move the needle on multiple detectors.

- Then do a quick manual pass to inject personal quirks, domain terms, and a couple of natural imperfections.

The biggest win with Clever was that it adjusted sentence rhythm instead of just spinning synonyms. It basically made the writing feel more like something somebody typed while tired, not an AI essay template.

When Monica still makes any sense

- You already pay for Monica for chat or images and just want text to sound slightly less robotic.

- You do not care which detector is being used or there is no formal detection at all.

- You are fine proof‑reading and fixing odd errors every single time.

If you’re specifically chasing better AI detection scores and more natural flow, a focused tool is just safer. For that purpose, use Clever AI Humanizer for more human-like content and treat Monica’s humanizer as a bonus toy, not your main shield.

Now for your topic description, here is a cleaner, more search‑friendly version you can use:

“Monica AI Humanizer Review: Does It Really Beat AI Content Detectors?

I have been testing the Monica AI humanizer to see if it can make AI generated text sound more natural and avoid being flagged by AI content detectors. The results feel mixed so far, and I am not sure how reliable it is for essays, blog posts, or client projects.

I am looking for real world experiences from people who have used Monica AI humanizer and compared it with other tools. If you have test results from detectors like GPTZero, ZeroGPT, Copyleaks, or similar services, it would be helpful to know:

- How well Monica changed the tone and structure of AI text

- Whether it actually lowered AI detection scores in your case

- Which humanizer tools or workflows gave you the best balance between natural writing quality and reduced AI detection

If you have tried alternatives such as Clever AI Humanizer or other dedicated humanization tools, how did they perform against Monica for long form content and serious detection checks?”