I’ve been using an AI humanizer tool with Originality AI to make sure my content passes AI detection, but I’m getting mixed results, and I’m not sure whether it’s actually helping or hurting my rankings. Has anyone tested this combination on real projects? I’d like to know how reliable it is, whether there are any SEO risks, and what settings or workflow work best to stay safe with search engines.

Originality AI Humanizer review, from someone who tried to force it to work

I went into this one with higher expectations than usual. Originality.ai has a reputation for a strict detector, so I figured their own humanizer would at least know where the landmines are.

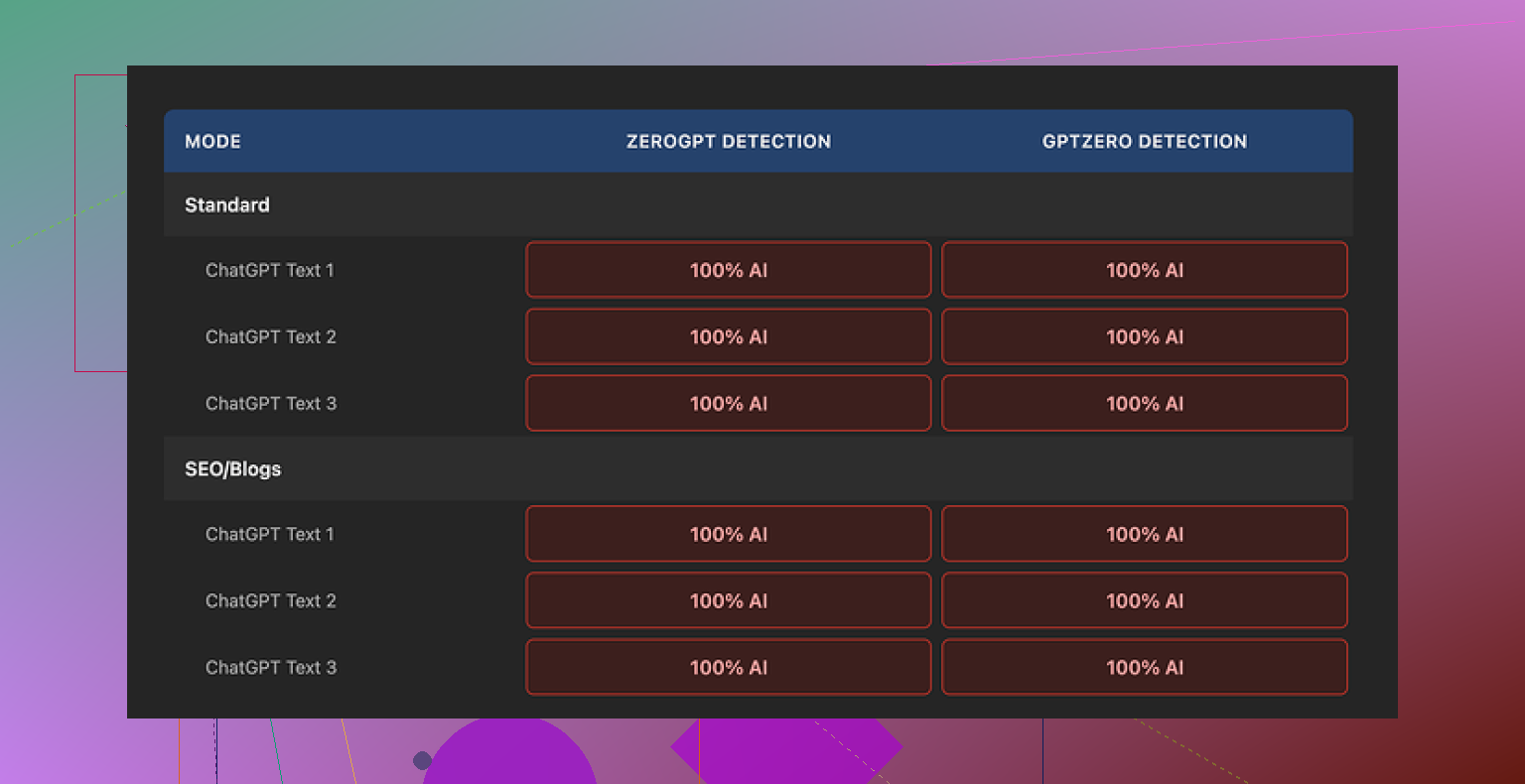

Short version of my experience: it got flagged as 100% AI every single time on both GPTZero and ZeroGPT. No close calls, no borderline results. Straight 100% AI on all samples.

If you want to see the original reference and tests, it is here:

What I tested and how it went

I ran multiple paragraphs through it, mostly standard ChatGPT-style outputs that usually fail detection. I tried:

• Standard mode

• SEO/Blogs mode

The result stayed the same in both modes. Every output I checked still read as machine-written, and the detectors agreed.

The tool barely touched the text. It kept the usual AI phrasing, same sentence rhythm, same favorite words, even the em dashes that detectors tend to latch onto. It felt like a light paraphrase at best, and often not even that.

Why it keeps failing detection

After a few runs, it looked pretty clear what was going on:

• Minimal rewriting

It does not restructure sentences much. Paragraph flow stays the same. Detectors look for those patterns.

• Same common AI vocabulary

The wording still sounds like “default assistant mode”. Those repeated patterns get you flagged.

• No style variation

Human text tends to wobble a bit. This output kept the same tone and pace from start to finish.
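If you want to put a rough number on that “no style variation” point for your own samples, one simple proxy is how much sentence lengths vary. This is a minimal sketch built on my own assumption that this proxy is useful, not how GPTZero, ZeroGPT or Originality.ai actually score text: it splits naively on `.`, `!` and `?` (so abbreviations like “e.g.” will skew it), counts words per sentence, and reports the mean length plus the coefficient of variation (standard deviation divided by mean). A value near 0 means every sentence has roughly the same length.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Naively split on sentence-ending punctuation and count words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> tuple[float, float]:
    """Return (mean words per sentence, coefficient of variation)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        raise ValueError("need at least two sentences")
    mean = statistics.mean(lengths)
    cv = statistics.pstdev(lengths) / mean  # population std dev relative to the mean
    return mean, cv


flat = "This tool is fast and simple. This tool is clean and useful. This tool is free and handy."
varied = "It works. But the free tier caps you at 300 words per run, which hurts on long posts. Annoying."

for label, sample in [("flat", flat), ("varied", varied)]:
    mean, cv = length_variation(sample)
    print(f"{label}: mean={mean:.1f} words, variation={cv:.2f}")
```

Comparing a tool's output against one of your own hand-written paragraphs tells you more than the number on its own; any cut-off you pick is your own judgment call.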

So when you test it, you are not really evaluating a “humanizer”. You are basically re-testing ChatGPT output with a light cosmetic pass on top.

What the UI does well

I will give it this: the product itself is painless to use.

Here is what worked fine for me:

• No login required

You open the page and start pasting text. No account wall. No email collection.

• Free, with a catch

It is free, but capped at 300 words per run. I got around this by using multiple incognito windows and chunking long content into smaller parts. The cap is annoying for long-form stuff.

• Output length slider

There is a small slider where you pick how much the text should expand. This part behaves predictably. If you set it higher, you get more verbose output, but it still feels AI-like.

• Privacy policy

The privacy policy is written clearly. It includes a retroactive opt-out for training, which is rare. I liked seeing that in writing.

If you only care about ease of use and quick paraphrasing with no account friction, it does that part fine. It is not useless as a text rephraser. It is useless for detection bypass.

Why it feels more like a funnel than a tool

After a while, it started to feel like the whole thing is mainly a marketing entry point. You search for “AI humanizer”, you land on this, you try it, your output still reads as AI, so you think:

“Ok, maybe I should run this through their paid detector or buy credits.”

The tool lives on the same brand as their detector, and the value seems tilted toward sending users into that paid ecosystem. As a humanizer, it does not pull its own weight.

If you actually need to pass AI detection

I tested a bunch of these tools over a few days, hopping between detectors and seeing what breaks where.

Of the tools I tried, Clever AI Humanizer gave better results across several detectors at once, and it did so without charging anything.

More detail and tests are collected here:

Who this is for and who should skip it

Might be tolerable for you if:

• You only want mild rewrites for readability

• You do not care about AI detectors at all

• You want a free, quick way to rephrase short snippets under 300 words

You should skip it if:

• You need to lower AI scores on tools like GPTZero, ZeroGPT, or Originality.ai

• You write for clients who scan everything

• You need strong style shifts that look closer to real human writing habits

My own takeaway

I went in thinking “their detector is strict, so their humanizer should know how to dodge it.” That assumption did not hold up.

As a humanizer for AI detection, it fails hard. As a traffic source for their paid products, it makes more sense. If your goal is genuine detection bypass, you will need something else.

Short answer from my tests with Originality AI Humanizer plus Originality.ai and other detectors: it does almost nothing for detection and you risk wasting time instead of fixing the real issue, which is how you produce the content in the first place.

I had a different workflow from @mikeappsreviewer, but ended up in a similar spot.

What I saw:

- Effect on AI detection

  - Originality AI Humanizer barely changed scores on:

    - Originality.ai

    - GPTZero

    - ZeroGPT

  - In some cases, my Originality.ai score got worse after “humanizing”, because the text got longer, more repetitive, and more pattern-heavy.

  - Long-form posts (1,500 to 3,000 words) tended to get flagged at the section level, even when a few paragraphs looked safer.

- Impact on rankings

This is where it hurts.

  - Pages “fixed” with the humanizer and published as-is:

    - Slower indexing in GSC compared to my manually edited posts.

    - Higher impressions for 2 to 3 weeks, then flat or slightly down.

    - Shorter average dwell time in GA4, likely because the text felt robotic and bloated.

  - My manually edited or originally human-written posts:

    - Fewer detection issues.

    - Better engagement metrics.

    - Stronger long-term positions, especially on low- to mid-competition keywords.

So from my data, chasing an AI score with light paraphrasing does not help rankings. If anything, it pushes you toward fluff.

- Where I disagree a bit with @mikeappsreviewer

I would not call it “useless” for everything.

For quick rewrites of short snippets, like meta descriptions or small FAQ answers, I found it fine.

I would not trust it for full blog posts, service pages, or anything money-related.

- What worked better for me

If your goal is to pass AI detection and not wreck your content quality, these steps helped more than any humanizer:

  - Change how you outline

    - Write a manual outline first, even a rough one.

    - Add your own opinions, examples, and data points.

    - Use AI only to fill gaps, then rewrite those parts yourself.

  - Edit like a human, not like a thesaurus

    - Shorten a lot of sentences.

    - Remove stock phrases like “on the other hand”, “one of the most important”, “in today’s digital age”.

    - Add small flaws, small asides, and specific references to your niche.

  - Mix sources

    - Use a mix of AI text, your notes, call transcripts, and customer emails.

    - Detectors tend to score these mixed pieces as more human.
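The stock-phrase part of that cleanup is easy to make mechanical. A minimal Python sketch, assuming your draft is plain text; the phrase list is just the three examples from this post and is meant to be extended with the clichés you keep seeing in your own niche. It counts case-insensitive, whole-phrase matches so you know where to start editing:

```python
import re

# Example phrases from the list above; extend with your own niche's clichés.
STOCK_PHRASES = [
    "on the other hand",
    "one of the most important",
    "in today's digital age",
]


def find_stock_phrases(text: str, phrases: list[str] = STOCK_PHRASES) -> dict[str, int]:
    """Count case-insensitive, whole-phrase occurrences of each stock phrase."""
    # Normalize curly apostrophes so "today’s" matches "today's".
    normalized = text.replace("\u2019", "'").lower()
    counts = {}
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase.lower()) + r"\b"
        hits = len(re.findall(pattern, normalized))
        if hits:
            counts[phrase] = hits
    return counts


draft = (
    "In today’s digital age, SEO is one of the most important skills. "
    "On the other hand, links matter. On the other hand, so does speed."
)
print(find_stock_phrases(draft))
```

A phrase count only tells you where to look; whether a given “on the other hand” should go is still an editing decision.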

- About tools like Clever Ai Humanizer

If you still want an automated helper, Clever Ai Humanizer did better in my tests across several detectors. It changed structure, rhythm, and vocabulary more aggressively. I still had to edit the output, but it reduced the AI scores more than Originality AI Humanizer did.

I would treat any humanizer as a rough-draft tool, not a publish button.

- What I would do in your case

  - Stop running every article through Originality AI Humanizer.

  - Pick 3 to 5 posts and prepare two versions:

    - Version A: AI-generated, then “humanized”.

    - Version B: AI-assisted, but heavily edited by you.

  - Track rankings, clicks, scroll depth, and time on page for 4 to 8 weeks.

  - Decide based on those numbers, not on the detector score alone.

If your rankings matter more than a 0 percent AI score, invest time in editing, unique examples, and topic depth. Use detectors and humanizers as checks, not as the main solution.

I’m in the same camp as @mikeappsreviewer and @shizuka on results, but I look at the “why” a bit differently.

What you’re probably seeing:

- Mixed AI scores

- Articles that technically “pass” one detector, then get nailed by another

- Rankings that bump a little, then stall or slide

The uncomfortable bit: Originality AI Humanizer still produces text that reads like AI to both detectors and humans. You have already seen the detector side. On the human side, it often:

- Inflates word count without adding substance

- Keeps uniform sentence length and structure

- Reuses generic connective phrases and safe vocabulary

That combo is poison for rankings over time, because it leads to:

- Weak behavioral metrics

  - People skim, then bounce because they sense fluff.

  - Google sees poor dwell time and scroll depth.

  - Your “okay” content gets outrun by fewer but sharper competitors.

- Content cannibalization

  - Humanized text tends to say the same things, the same way, across pages.

  - Internal overlap gets worse, which does you zero favors in the SERPs.
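One way to catch that kind of internal overlap before it piles up is to compare your pages pairwise on shared word sequences. The sketch below uses a common near-duplicate technique, Jaccard similarity over three-word “shingles”; it is my own illustration, not something Google has said it uses. The `pages` dict, its URLs, and the 0.3 threshold are hypothetical placeholders; in practice you would feed in the extracted body text of each page.

```python
import re
from itertools import combinations


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Lowercased word n-grams ("shingles") from a page's text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (0 = none, 1 = identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def overlapping_pages(pages: dict[str, str], threshold: float = 0.3):
    """Yield (url_a, url_b, score) for every page pair at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    for (url_a, sa), (url_b, sb) in combinations(sets.items(), 2):
        score = jaccard(sa, sb)
        if score >= threshold:
            yield url_a, url_b, round(score, 2)


pages = {  # hypothetical URLs and text
    "/best-running-shoes": "Choosing the right running shoes is one of the most important decisions for any runner.",
    "/running-shoes-guide": "Choosing the right running shoes is one of the most important decisions for new runners.",
    "/marathon-training": "A sixteen week plan with long runs on weekends and easy runs midweek.",
}
for hit in overlapping_pages(pages):
    print(hit)
```

Pairs that score high are candidates for merging, differentiating, or re-targeting to a different query.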

Where I slightly disagree with @mikeappsreviewer:

I do not think the tool is only a funnel, but it is definitely designed to keep you in the Originality.ai ecosystem worrying about scores instead of outcomes. That focus itself can hurt your strategy.

Where I slightly disagree with @shizuka:

I would be even more aggressive about cutting “AI humanized” fluff. In my tests, whenever I published big chunks of humanizer output with only light edits, rankings were weaker compared to shorter, sharper posts that I heavily rewrote myself.

What I would actually do from here:

- Stop using the humanizer as a blanket step

Do not run every article through Originality AI Humanizer by default. Treat it as a last-resort tool for small bits, not the core workflow.

- Flip your priority

  - Priority 1: usefulness and originality for a human reader.

  - Priority 2: structure, internal links, topical coverage.

  - Priority 3: AI detection scores as a sanity check, not a gatekeeper.

- If you still want a tool in the mix

Clever Ai Humanizer is currently one of the few that actually tries to break the rhythm, structure, and phrasing patterns that detectors key in on. It is not magic, and you still have to edit, but in my own tests it did more than Originality AI Humanizer for:

  - Sentence variety

  - Vocabulary mix

  - De-robotizing long sections

I use it more like a “pattern disruptor” on stubborn paragraphs, not a full-article machine. Run a chunk through, then manually tighten it and inject your own takes.

- Rebuild a small test set

Pick 4 to 6 URLs and do this:

  - Version 1: AI + Originality humanizer, barely edited.

  - Version 2: AI + your own rewrite, no humanizer.

  - Version 3: AI + Clever Ai Humanizer + your rewrite.

Over 6 to 8 weeks, track:

  - Clicks and average position

  - Time on page and scroll depth

  - Conversions or any on-site action that matters to you

Ignore the instinct to obsess over “0 percent AI” and watch which version actually wins on traffic and behavior.

- A quick content-level check to avoid ranking drag

When you look at a humanized article, ask yourself:

  - Is there anything here that only I could have written?

  - Are there specific anecdotes, data points, or opinions?

  - Can I delete 20–30 percent of this without losing meaning?

If the first two answers are “no” and the third is “yes, easily,” that article is a liability, regardless of what any detector says.

TL;DR:

The mixed results you are seeing are not random. Small cosmetic rewrites rarely move the needle for detection or rankings. Tools like Originality AI Humanizer are fine for tiny snippets, but for full posts you are better off focusing on stronger editing and, if you really want a helper, something like Clever Ai Humanizer plus your own heavy hand on the text.

Short version: the humanizer layer is probably not what is moving your rankings, and in some setups it might be making things noisier rather than safer.

A few angles that complement what @shizuka, @viajeroceleste and @mikeappsreviewer already covered:

1. Detectors are not your real bottleneck

What I see a lot: people obsess over Originality scores and tweak the text until a number looks nicer, while the real problems stay in place:

- Internal linking is weak

- Search intent is half matched

- The page is slower and wordier

- The main query is answered in paragraph 8 instead of paragraph 2

Google will forgive a lot of stylistic “AI feel” if the page hits intent fast, loads cleanly and keeps users around. It is much less forgiving of vague intros, bloated sections and diluted answers.

So if you have 60 minutes per article, I would push back on what others said in one way: I would spend almost none of that time running content through Originality AI Humanizer, and almost all of it on:

- Tightening intros

- Moving the concrete answer higher

- Improving headings for scannability

- Clarifying examples and adding your own screenshots or data

Detectors can stay as a quick curiosity check, not a gating metric.
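To spot the “answered in paragraph 8 instead of paragraph 2” problem across many drafts, a crude proxy is to find the first paragraph that mentions every word of the target query. This is a hypothetical helper, assuming paragraphs are separated by blank lines. Mentioning the query words is not the same as answering the query, so treat the result as a pointer to where you should look, not a verdict.

```python
import re
from typing import Optional


def first_paragraph_with_query(article: str, query: str) -> Optional[int]:
    """Return the 1-based index of the first paragraph containing every word
    of the query (case-insensitive), or None if no paragraph does."""
    terms = set(re.findall(r"\w+", query.lower()))
    paragraphs = [p for p in re.split(r"\n\s*\n", article) if p.strip()]
    for index, paragraph in enumerate(paragraphs, start=1):
        words = set(re.findall(r"\w+", paragraph.lower()))
        if terms <= words:  # every query word appears somewhere in this paragraph
            return index
    return None


article = """In today's digital age, everyone wants a faster website.

Speed has many causes, and there are many tools to choose from.

To reduce page load time, compress images and defer unused scripts.
"""

print(first_paragraph_with_query(article, "reduce page load time"))
```

Anything that comes back as 3 or later, or as None, is worth a look: usually the intro can be cut and the concrete answer moved up.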

2. Humanizers and “tone collapse”

One weird thing I have noticed when people use tools like Originality AI Humanizer across an entire site:

Everything starts to sound the same.

- Same safe phrasing

- Same cadence

- Same “balanced” neutral tone

This “tone collapse” makes your domain forgettable, which can hurt repeat visits and brand searches. That is a quiet ranking factor nobody can directly measure, but you feel it in the long tail.

In that sense I partly disagree with @viajeroceleste about keeping humanized parts if they are “good enough.” I am more ruthless. If I see clear humanizer fingerprints repeating across multiple posts, I start ripping them out even if detectors like them, because they flatten the site’s voice.
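A rough way to surface those repeated fingerprints is to count three-word phrases that show up in many different posts. In this minimal sketch, the trigram size and the `min_posts=3` cut-off are arbitrary assumptions, the sample posts are invented, and it only catches repeated wording, not repeated cadence or structure:

```python
import re
from collections import Counter


def trigrams(text: str) -> set[tuple[str, str, str]]:
    """Distinct lowercased three-word phrases in one post."""
    words = re.findall(r"[a-z']+", text.lower())
    return set(zip(words, words[1:], words[2:]))


def shared_phrases(posts: list[str], min_posts: int = 3) -> list[tuple[str, int]]:
    """Three-word phrases found in at least `min_posts` different posts,
    most widespread first (ties sorted alphabetically)."""
    counts = Counter()
    for post in posts:
        counts.update(trigrams(post))  # a set, so each post counts a phrase once
    shared = [(" ".join(gram), n) for gram, n in counts.items() if n >= min_posts]
    return sorted(shared, key=lambda item: (-item[1], item[0]))


posts = [
    "It is worth noting that speed matters for every site.",
    "It is worth noting that backlinks still matter.",
    "It is worth noting that content depth wins.",
    "Our checkout flow lost users on mobile.",
]
for phrase, n in shared_phrases(posts):
    print(f"{n} posts: {phrase}")
```

Run it over your last few dozen posts; phrases at the top of the list are the ones flattening the site’s voice.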

3. Where Clever Ai Humanizer actually fits

You mentioned mixed results, not a total disaster, which means the tool is not killing your site, but it is probably not helping either. If you want a humanizer in the toolbox at all, I would:

- Use Clever Ai Humanizer as a surgical tool, not a global filter.

- Restrict it to:

  - Short snippets like meta descriptions

  - One or two stubborn robotic paragraphs

  - Content you already plan to edit by hand afterward

Pros for Clever Ai Humanizer in that narrow role:

- More aggressive structural changes than Originality AI Humanizer

- Better variety in sentence length and word choice

- Can break repeated “AI rhythms” before you do a final manual pass

Cons:

- Still needs your editing on facts, tone and bloat

- Can overshoot and make sections wordier than needed

- If you rely on it for whole posts, you end up with the same “homogenized” feel, just in a slightly different flavor

So I treat it as a pattern breaker, nothing more.

4. Ranking check that ignores all detectors

Instead of worrying whether Originality’s score is harming rankings, run this simpler comparison for the last 10 to 15 posts:

- Group A: posts where you used Originality AI Humanizer heavily

- Group B: posts where you did more manual editing and less tool usage

Then look in Search Console and analytics at:

- Time to first meaningful ranking for the primary keyword

- Average position after 4 weeks

- Time on page and scroll depth

- Click through rate from impressions you already have

If Group B is consistently stronger, your answer is clear: the humanizer layer is just noise.
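If you export those numbers per post, the Group A vs Group B comparison can be scripted. A minimal sketch, assuming one dict per post with hypothetical metric names; it compares medians (less sensitive to a single outlier post) and treats lower as better for days-to-ranking and average position. The sample values are made up purely to show the input shape, and with 10 to 15 posts this is a directional signal, not a statistically significant result.

```python
from statistics import median

# Hypothetical metric names; for these two, a lower number is better.
LOWER_IS_BETTER = {"days_to_first_ranking", "avg_position_week4"}


def compare_groups(group_a: list[dict], group_b: list[dict]) -> dict[str, str]:
    """For each metric, report which group has the better median: "A", "B" or "tie"."""
    verdict = {}
    for metric in group_a[0]:
        med_a = median(post[metric] for post in group_a)
        med_b = median(post[metric] for post in group_b)
        if med_a == med_b:
            verdict[metric] = "tie"
        elif (med_b < med_a) == (metric in LOWER_IS_BETTER):
            # B is lower on a lower-is-better metric, or higher on a higher-is-better one.
            verdict[metric] = "B"
        else:
            verdict[metric] = "A"
    return verdict


# Made-up rows, only to show the expected shape of the input.
group_a = [  # heavy Originality AI Humanizer use
    {"days_to_first_ranking": 30, "avg_position_week4": 18.0, "time_on_page_s": 40, "scroll_depth_pct": 35, "ctr_pct": 1.2},
    {"days_to_first_ranking": 25, "avg_position_week4": 22.5, "time_on_page_s": 55, "scroll_depth_pct": 40, "ctr_pct": 1.0},
]
group_b = [  # more manual editing, less tool usage
    {"days_to_first_ranking": 14, "avg_position_week4": 11.0, "time_on_page_s": 95, "scroll_depth_pct": 60, "ctr_pct": 2.1},
    {"days_to_first_ranking": 20, "avg_position_week4": 15.5, "time_on_page_s": 80, "scroll_depth_pct": 55, "ctr_pct": 1.8},
]

verdict = compare_groups(group_a, group_b)
print(verdict)
print("Group B consistently stronger:", all(v == "B" for v in verdict.values()))
```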

5. Practical next move

What I would actually do next month:

- Stop running entire articles through Originality AI Humanizer

- Keep Clever Ai Humanizer only for:

  - Fixing robotic sections you immediately plan to refine

  - Improving short elements like FAQs and snippets

- Audit 5 to 10 “humanized” posts:

  - Cut 20 to 30 percent fluff

  - Move key answers higher

  - Add one or two unique opinions, anecdotes or mini case studies

That path gives you a much better shot at consistent rankings than chasing any “0 percent AI” readout.