I’m considering using Walter Writes AI Reviews to evaluate different AI tools, but I’m unsure how safe or reliable it really is. I’ve seen mixed opinions online about its trustworthiness, data privacy, and whether the reviews are unbiased or sponsored. Can anyone who has used it share honest experiences, potential risks, and what I should watch out for before relying on it?

Walter Writes AI review, from someone who burned a few credits on it

Walter Writes AI looked promising on paper, so I spent an afternoon running it through the usual detector gauntlet. The results were messy.

I used the free tier, which only gives you the Simple mode. The nicer modes, Standard and Enhanced, sit behind the paywall, so keep that in mind.

Here is what happened when I pushed a few samples through:

One sample came back with a 29% score on GPTZero and 25% on ZeroGPT. For a free-level humanizer, that is better than most of the junk tools I have tried. That run looked like something you might get away with on a casual check.

Then the other two samples blew up. Both hit 100% on at least one detector. Same tool, same mode, similar input length. Output quality swung hard between “this might pass” and “this is screaming AI” without much warning.

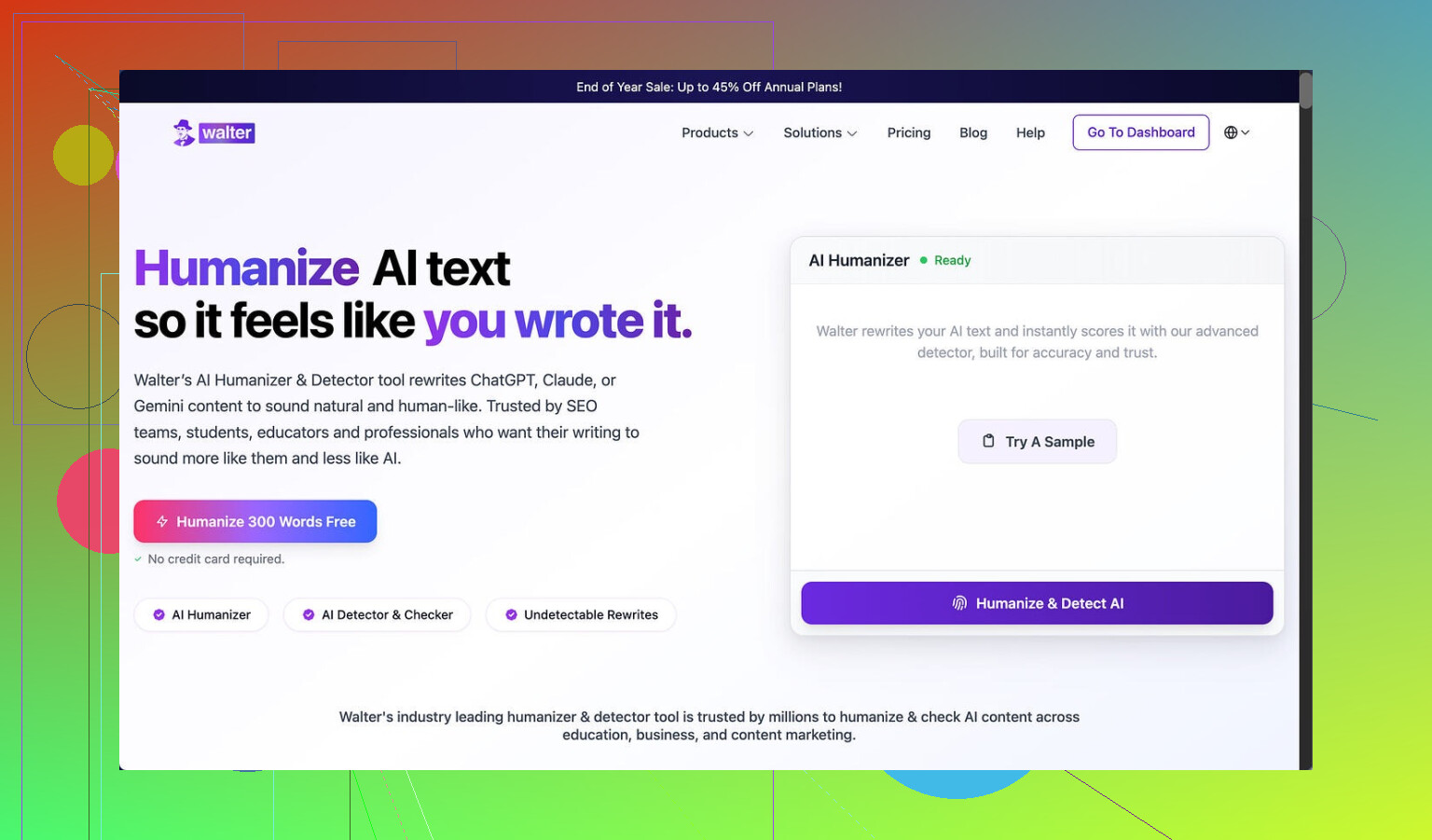

What the output looked like

The style had some patterns that kept tripping my gut check.

• Semicolons showed up where a normal person would use a comma or just split the sentence. Not once or twice, but over and over, in weird spots.

• In one sample, the word “today” showed up four times in three sentences. Nobody writes like that unless they are forcing a keyword or an AI got obsessed with a word choice.

• Parentheses spam. Stuff like “(e.g., storms, droughts)” repeated in different places. It is that academic-style padding that detectors love to flag.

I did not see much variation between runs either. You change the prompt and still get the same sentence shapes, the same connective phrases, the same structure. It looks clean at first glance, then you read it twice and it feels stiff.
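If you want something more repeatable than a gut check, the three tics above (semicolons, a word repeated inside a few sentences, parenthetical padding) can be counted with a rough script. This is only a sketch: the function names, the stopword list, the 3-sentence window, and the threshold of 3 are my own assumptions, and the sample string is made up for illustration, not real Walter output.

```python
import re
from collections import Counter

# Tiny illustrative stopword list (an assumption, not a standard one).
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "it",
             "that", "for", "on", "with", "as", "are", "was", "be", "this"}

def split_sentences(text):
    # Naive split: sentence-ending punctuation followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def style_tics(text, window=3, repeat_threshold=3):
    """Count semicolons, parentheticals, and words repeated within a sentence window."""
    sentences = split_sentences(text)
    repeated = set()
    for i in range(len(sentences)):
        chunk = " ".join(sentences[i:i + window]).lower()
        counts = Counter(w for w in re.findall(r"[a-z']+", chunk)
                         if w not in STOPWORDS)
        repeated.update(w for w, n in counts.items() if n >= repeat_threshold)
    return {
        "semicolons": text.count(";"),
        "parentheticals": len(re.findall(r"\([^()]*\)", text)),
        "repeated_words": sorted(repeated),
    }

# Made-up sample that imitates the quirks described above.
sample = ("Today the climate is changing; today we see more extreme weather "
          "(e.g., storms, droughts). Farmers today face losses; cities adapt. "
          "Today matters (e.g., storms, droughts).")
report = style_tics(sample)
print(report)
```

It will not tell you whether a detector flags the text, but it makes it easy to compare two outputs on the same quirks instead of relying on memory.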

Pricing and limits

Their pricing structure felt tight for what you get.

• Starter plan: $8 per month on annual billing, 30,000 words total.

• The Unlimited tier is $26 per month, but each submission is still capped at 2,000 words. So yes, you get volume, but you keep pasting things in small chunks.

• Free tier: 300 words total. Not per day, total. That is basically three small tests and you are done.
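To put those numbers in perspective, here is the arithmetic as a quick sketch. The prices and limits come from the list above; the 7,500-word draft and the 100-word test size are hypothetical values I picked for illustration, and I am treating the 30,000 words as the Starter plan's full allowance for the $8.

```python
import math

# Figures quoted in the post above.
STARTER_PRICE_USD = 8.00
STARTER_WORDS = 30_000
UNLIMITED_CAP_WORDS = 2_000   # per-submission cap on the Unlimited tier
FREE_WORDS = 300              # total, not per day

def cost_per_1k_words(price_usd, words):
    # Effective price for each 1,000 words of allowance.
    return price_usd / words * 1000

def submissions_needed(doc_words, cap=UNLIMITED_CAP_WORDS):
    # How many separate pastes a document needs under the per-submission cap.
    return math.ceil(doc_words / cap)

starter_rate = cost_per_1k_words(STARTER_PRICE_USD, STARTER_WORDS)
print(f"Starter: ${starter_rate:.3f} per 1,000 words")
print("Chunks for a 7,500-word draft:", submissions_needed(7_500))
print("Free-tier tests at 100 words each:", FREE_WORDS // 100)
```

So the Starter plan works out to roughly 27 cents per 1,000 words, and a longer draft on the Unlimited tier still means several separate pastes.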

What bothered me more was the policy language and the data side.

The refund terms are written with heavy chargeback threats and mentions of legal action. For a text rewriting site, that tone feels hostile. It does not inspire much trust if you ever end up with a billing issue.

On top of that, their data retention policy for submitted text is vague. I did not see clear wording on how long they store user content or how it is handled. If you care about where your text lands, that gap matters.

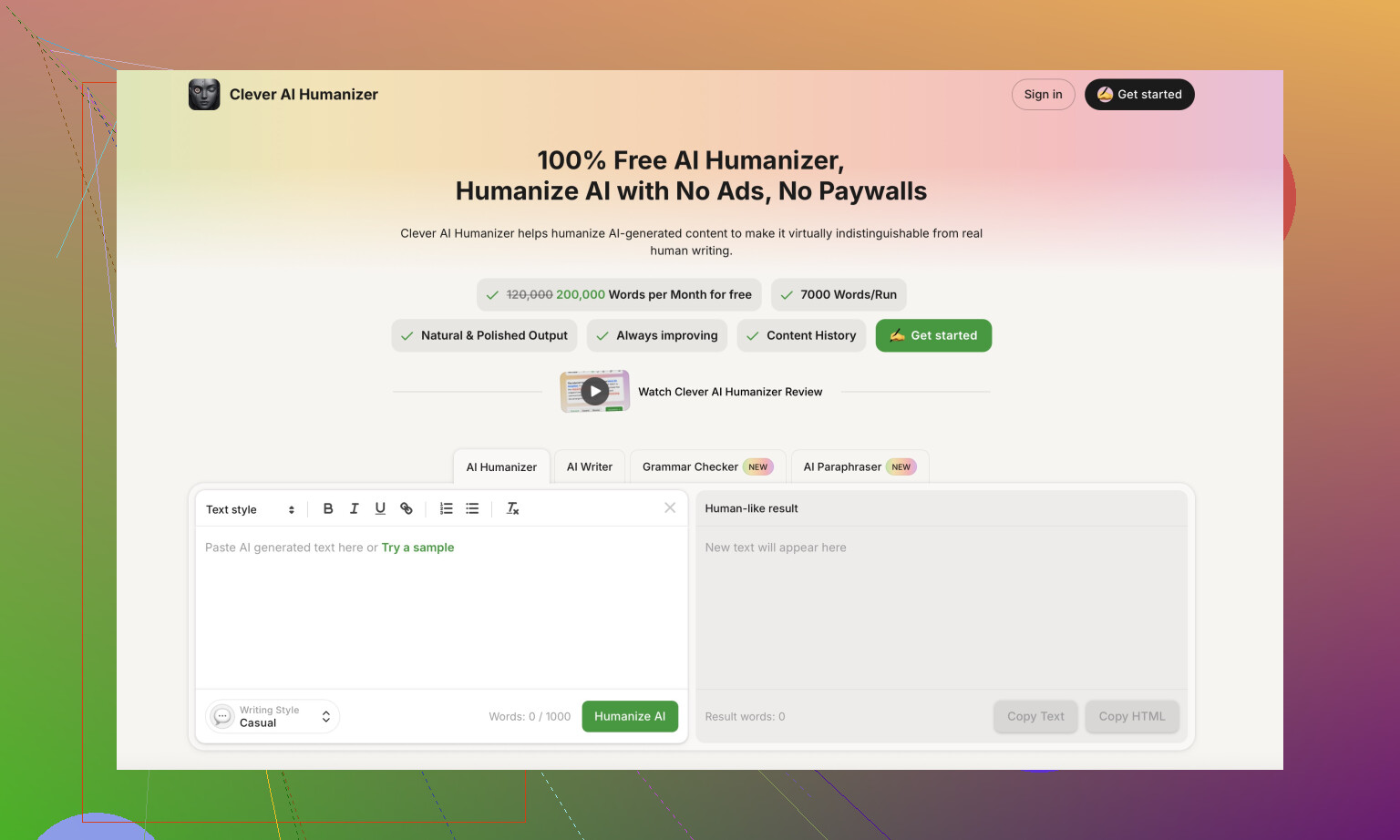

How it compares to Clever AI Humanizer

During the same round of tests, I put the same type of input into Clever AI Humanizer and checked it on the same detectors.

Clever AI Humanizer consistently sounded closer to what I see from real people. More natural sentence breaks, less weird repetition, fewer “AI tell” phrases. I did not have to fight the output as much.

It also does not ask for payment for basic use.

If you want to see how other people are running it, or you need a walkthrough, these helped:

Reddit tutorial on using humanizers:

Humanize AI (Reddit Tutorial)

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Another Reddit post with a review of Clever AI Humanizer:

Clever Ai Humanizer Review on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

If you are deciding where to put money, I would not start with Walter on the paid side. The free Simple mode already shows the quirks, and the detection swings were too big in my runs.

Short version: I would not use Walter Writes AI Reviews as a decisive tool for judging other AI tools, and I would be careful with what text or data you feed it.

A few specific points, without repeating what @mikeappsreviewer already tested:

- Reliability of its “reviews” of AI tools

Walter is itself an AI layer on top of other models. So when it “reviews” tools, you get:

• No transparent methodology. You do not see clear criteria, weights, or benchmarks.

• No reproducibility. You run the same kind of input, you get different tone and conclusions.

• Strong template feel. The wording and structure often sound like pre-shaped opinions with light edits.

If you want trustworthy evaluations of AI tools, you need:

• Clear test setup. Model version, prompts, metrics.

• Side by side outputs.

• Detection or quality scores with context.

Walter does not expose that in a systematic way. So I would treat its “reviews” as flavored summaries, not as something you rely on for serious decisions.

- Data privacy and safety

From what I saw in their docs and TOS:

• Data retention is vague. No exact retention window, no explicit deletion guarantees.

• No strong detail on whether text is used for model training or internal analytics.

• Legal and refund language is aggressive. Heavy talk about chargebacks and legal action. That tone usually signals they expect disputes and want to scare users early.

If you plan to paste proprietary prompts, client text, or anything sensitive, I would avoid it. That includes internal reports, academic work, or unpublished content.

- Detection and “humanizing” angle

Even if you are only using Walter to “review” AI tools, the engine behind it behaves like a humanizer / rewriter. From tests similar to what @mikeappsreviewer did:

• Detection scores swing a lot between runs.

• Style artifacts are consistent. Awkward punctuation, strange repetition, stiff transitions.

So if you rely on Walter’s opinion of “this tool is detectable” or “this sounds human”, that judgment is based on a system that itself triggers detectors in an unstable way. That weakens its value as an evaluator.

- Safety for your use case

Ask yourself:

• Are you sharing anything confidential, copyrighted, or unpublished?

If yes, I would not put it into Walter.

• Do you need rigorous comparison of AI tools?

If yes, I would not trust Walter as your main tester. Use your own controlled prompts and run them directly through the tools.

• Do you only want a quick, rough opinion or summary of features?

Then it is less risky, but I would still avoid paying until they clarify data handling and soften the legal tone.

- Alternatives and a more robust approach

If your goal is to see how “human” different AI tools sound or how they fare under detectors, a better workflow is:

• Generate outputs directly from each AI tool.

• Run them yourself through a few detectors, like GPTZero, ZeroGPT, and one or two others.

• Read them out loud and check for repetition, unnatural structure, or robotic transitions.

For humanizing and testing detector resistance specifically, Clever AI Humanizer is more focused on that task. Its outputs tend to read more like natural text and several users report more stable detector behavior. Use it as one step in your own test pipeline, not as an oracle, but it is more aligned with what you want than Walter’s review-style output.

- Overall take

Walter Writes AI Reviews is not “unsafe” in the sense of obvious malware, but it is not a strong choice if you need:

• Clear privacy guarantees.

• Stable, reproducible evaluation of AI tools.

• Professional tone around billing and refunds.

If you try it, keep it to:

• Non-sensitive text.

• Free tier or a single month test.

• Cross checking its opinions with your own direct tests and other tools like Clever AI Humanizer.

Treat its reviews as one opinion, not as a trusted authority.

Short answer: I would not treat Walter Writes AI Reviews as “safe and reliable” for serious evaluation of AI tools, especially if you care about privacy or consistent results.

To build on what @mikeappsreviewer and @sterrenkijker already showed from their tests:

- Trustworthiness of the reviews

Walter is basically an AI summarizer with a review template wrapped around it. That means:

- No disclosed testing protocol

- No real benchmarking data you can verify

- Output that feels like opinionated paraphrasing, not evidence-based comparison

So if you want to browse and discover tools, fine, it’s a quick way to get a feel for what’s out there. But if you’re trying to decide which model to adopt for a team or business workflow, relying on those “reviews” alone is asking for regret later.

- Data privacy and safety

This is the part that would keep me from pasting anything important into it:

- Vague retention policy

- No crystal-clear answer on whether your content is used for training or “analytics”

- Legal and refund language that reads more like a threat than a service agreement

I actually disagree slightly with the vibe that it’s “not unsafe” just because it isn’t malware. If a tool can quietly hold onto your inputs and maybe repurpose them, that is a safety issue if you’re dealing with client docs, academic work, or proprietary stuff. Not malware-level, but still a real risk.

- Reliability as an evaluator

This is the kicker: an evaluator needs to be more stable than what it’s judging.

From what’s been shown:

- Big swings in detection scores on the same tier and mode

- Repeated stylistic quirks that scream “this is one model with a narrow writing pattern”

- Opinions that can shift based on tiny changes in input prompt

If the underlying engine has unstable detection footprints and stiff style, I would not trust its “this tool is high quality / low quality” verdicts. At best, use it to skim summaries, then go run your own tests.

- How I’d actually evaluate AI tools instead

If you’re really trying to compare tools:

- Decide on 3–5 representative prompts for your use case (technical explainer, marketing text, code, whatever).

- Run those directly in each tool you’re considering.

- Check for: clarity, factual accuracy, structure, and how much editing you’d need.

- If stealth / human-ness matters, run the outputs through a few detectors yourself and compare.
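The steps above can be sketched as a small harness that keeps the prompts fixed and records, per tool and per detector, the average score and how far it swings. Everything here is an assumption for illustration: `compare_tools` is a name I made up, and the tool and detector callables are placeholders you would replace with however you actually get text out of each tool and a score out of each detector (copy-paste counts). No real detector API is being called.

```python
from statistics import mean

def compare_tools(tools, detectors, prompts):
    """Run fixed prompts through each tool and summarize detector scores.

    tools:     name -> callable(prompt) -> generated text
    detectors: name -> callable(text) -> score from 0 to 100
    """
    summary = {}
    for tool_name, generate in tools.items():
        outputs = [generate(p) for p in prompts]
        per_detector = {}
        for det_name, score in detectors.items():
            scores = [score(o) for o in outputs]
            per_detector[det_name] = {
                "mean": mean(scores),
                # Spread = max minus min; a large value means unstable results.
                "spread": max(scores) - min(scores),
            }
        summary[tool_name] = per_detector
    return summary

# Placeholder stand-ins so the sketch runs; they are NOT real tools or detectors.
fake_tools = {
    "tool_a": lambda p: p.upper(),
    "tool_b": lambda p: p + " indeed",
}
fake_detectors = {
    "length_stub": lambda text: min(100.0, float(len(text))),
}
result = compare_tools(fake_tools, fake_detectors, ["aa", "bbbb"])
print(result)
```

The spread column is the one to watch: a tool that averages well but swings wildly between prompts is the same problem people reported with Walter.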

For “humanizing” content or testing what passes detectors, Clever AI Humanizer is worth putting into that pipeline. It’s more focused on making AI text read like natural human writing and tends to produce fewer of those obvious “AI tics” Walter gets called out for. Not magic, not an oracle, but a better fit if your goal is to see how well text holds up under scrutiny.

- So, is it safe for your use case?

- Just browsing public info or non-sensitive blurbs: mostly fine, but temper your expectations.

- Pasting in reports, class papers, client work, or proprietary content: I would not.

- Using its “reviews” as your main decision-maker on what AI tools to adopt: also no. Use it, if at all, as a side note, not as the deciding factor.

tl;dr: Walter Writes AI Reviews feels more like a glossy AI opinion generator than a rigorous evaluator. Instead of outsourcing the whole judgment call to Walter, combine your own tests, a couple of detectors, and a tool like Clever AI Humanizer if you care about natural-sounding output.

Short version: I’d treat Walter as a toy or a second opinion, not as a serious evaluator or a safe place for anything sensitive.

A few angles that complement what others already showed:

1. “Review” quality in practice

What bothered me most is not just the template feel that @sterrenkijker and @mikeappsreviewer pointed out, but the directional bias. Walter tends to skew either overly positive or overly negative without clearly tying its verdict to concrete evidence like latency, cost per 1k tokens, or benchmark accuracy. That makes it more of a vibe generator than a reviewer. I actually think @viajantedoceu is a bit generous in treating it as a discovery tool; if you do use it that way, double check every big claim against the actual product pages.

2. Risk profile, not just “privacy”

Instead of asking “Is it safe?” I’d frame it as “What is the worst thing that can happen if my text leaks or is reused?”

- Class assignment or generic blog post: annoyance level only.

- Client docs, novel draft, internal strategy: potentially serious.

Given the vague retention and the aggressive legal language, Walter lands in the “do not risk anything you would not post in a public Discord” bucket for me.

3. Unstable detector footprint = weak judge

Everyone already showed that Walter’s own outputs jump all over AI detectors. I’d add: the consistent quirks like odd semicolons and word repetition are exactly the features that corrupt your own evaluation. If you paste text from another AI into Walter and then rely on its “review,” you are basically letting one noisy system comment on another, with Walter’s style noise layered on top. That is not much better than eyeballing it yourself.

4. Where Clever AI Humanizer actually fits

If your real goal is “make AI text read more naturally and see how tools compare,” then Clever AI Humanizer is closer to the job description, as a component in your workflow.

Pros of Clever AI Humanizer:

- Tends to produce more humanlike rhythm and sentence breaks, so you spend less time manually de-robotizing text.

- More stable behavior across similar prompts, which helps when you are comparing multiple AI tools side by side.

- Works well as a last-pass editor when you already know the content is correct and just want it to sound less like a model.

Cons of Clever AI Humanizer:

- It is still an AI writer, not a magic cloak. If the underlying content is shallow, it will just sound like a more fluent version of the same thing.

- Detector performance is better than Walter’s in a lot of reported tests, but not bulletproof, so using it to “beat all detectors” is unrealistic.

- You still need your own judgment; it will not tell you which tool is “best,” only help your final text feel more organic.

5. How I’d actually use these tools together

- For evaluation: run your own fixed set of prompts directly in the tools you care about, then optionally pass the outputs through Clever AI Humanizer to normalize style before you compare readability. Ignore Walter’s star ratings or sweeping conclusions.

- For risk management: anything confidential or valuable stays out of Walter completely. If you must use a humanizer in that context, keep the text anonymized and stripped of identifying details.
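For the "anonymized and stripped of identifying details" part, a rough pre-paste scrub might look like the sketch below. The regex patterns are deliberately simple and will miss plenty (addresses, IDs, unusual phone formats), the `redact` helper and placeholder tags are my own invention, and the sample draft is fictional. Treat it as a first pass before a manual read, not as a guarantee.

```python
import re

# Simple patterns; assumptions for illustration, not exhaustive matchers.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text, names=()):
    """Replace emails, phone-like numbers, and listed names with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for name in names:
        # Case-insensitive literal match on each name you supply.
        text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text

# Fictional sample text.
draft = ("Contact Jane Doe at jane.doe@example.com or +1 555 010 9999 "
         "about the Acme merger.")
cleaned = redact(draft, names=["Jane Doe", "Acme"])
print(cleaned)
# Contact [NAME] at [EMAIL] or [PHONE] about the [NAME] merger.
```

You still have to supply the names yourself, which is the point: the script only removes what you already know is sensitive.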

Bottom line

Walter Writes AI Reviews is fine if you think of it as an opinionated AI blogger. It is not fine as a trusted benchmark or a safe vault for meaningful data. For improving actual readability and doing your own comparisons, something like Clever AI Humanizer plus your own test prompts is simply a more controlled and transparent approach, even if it is not perfect either.