I’m struggling to find a reliable AI humanizer in 2026 that can make AI-generated text sound natural enough to pass manual reviews and basic AI detectors. I’ve tried a few tools, but the outputs still feel robotic or get flagged. Can anyone share which AI humanizers actually work well now, what features to look for, and any tools or workflows you personally recommend?

Best AI Humanizers I Tested In 2026

Real use, not theory

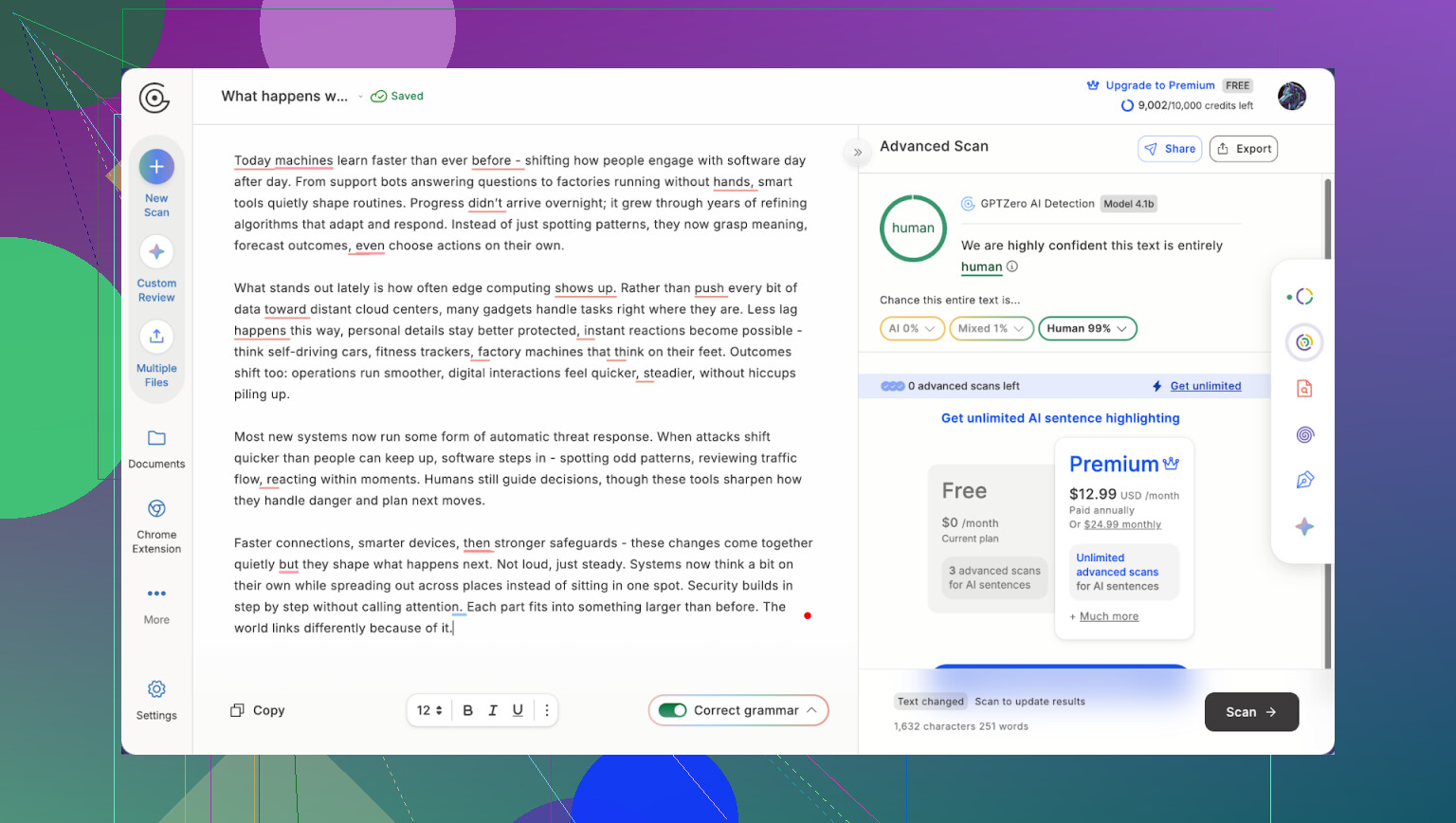

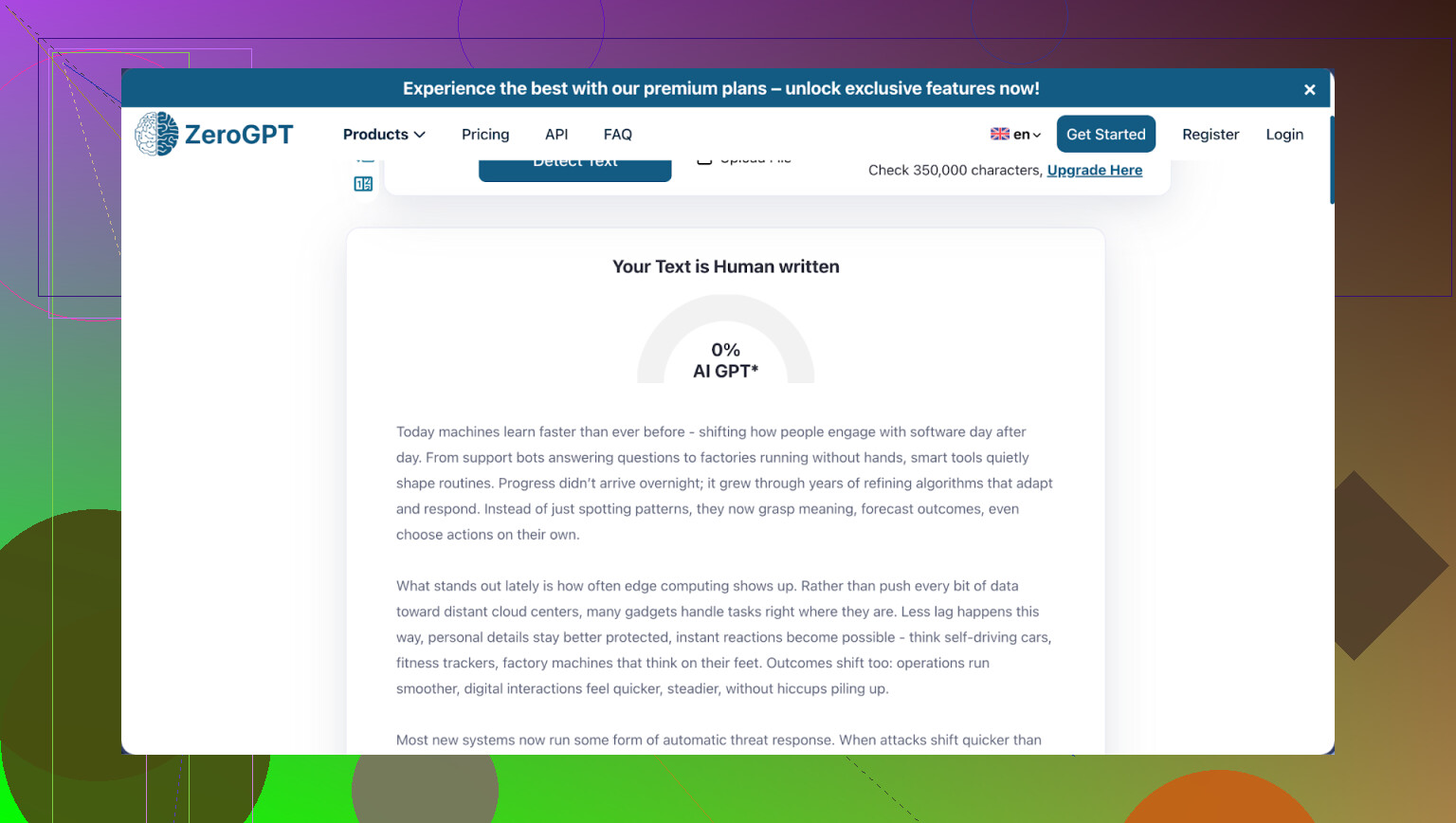

I spent a few weekends running the same ChatGPT text through every AI “humanizer” I could find. Ended up with 15 plus tools, two detectors, and a spreadsheet that looked like a bad science project.

Process was simple enough:

- Generate baseline text with ChatGPT.

- Run that through each humanizer.

- Test the outputs on GPTZero and ZeroGPT.

- Score writing quality by hand.

- Check pricing, limits, and terms.

Some glossy “premium” tools fell on their faces at step 3. A few quiet ones did better than I expected.

Here is what stuck.

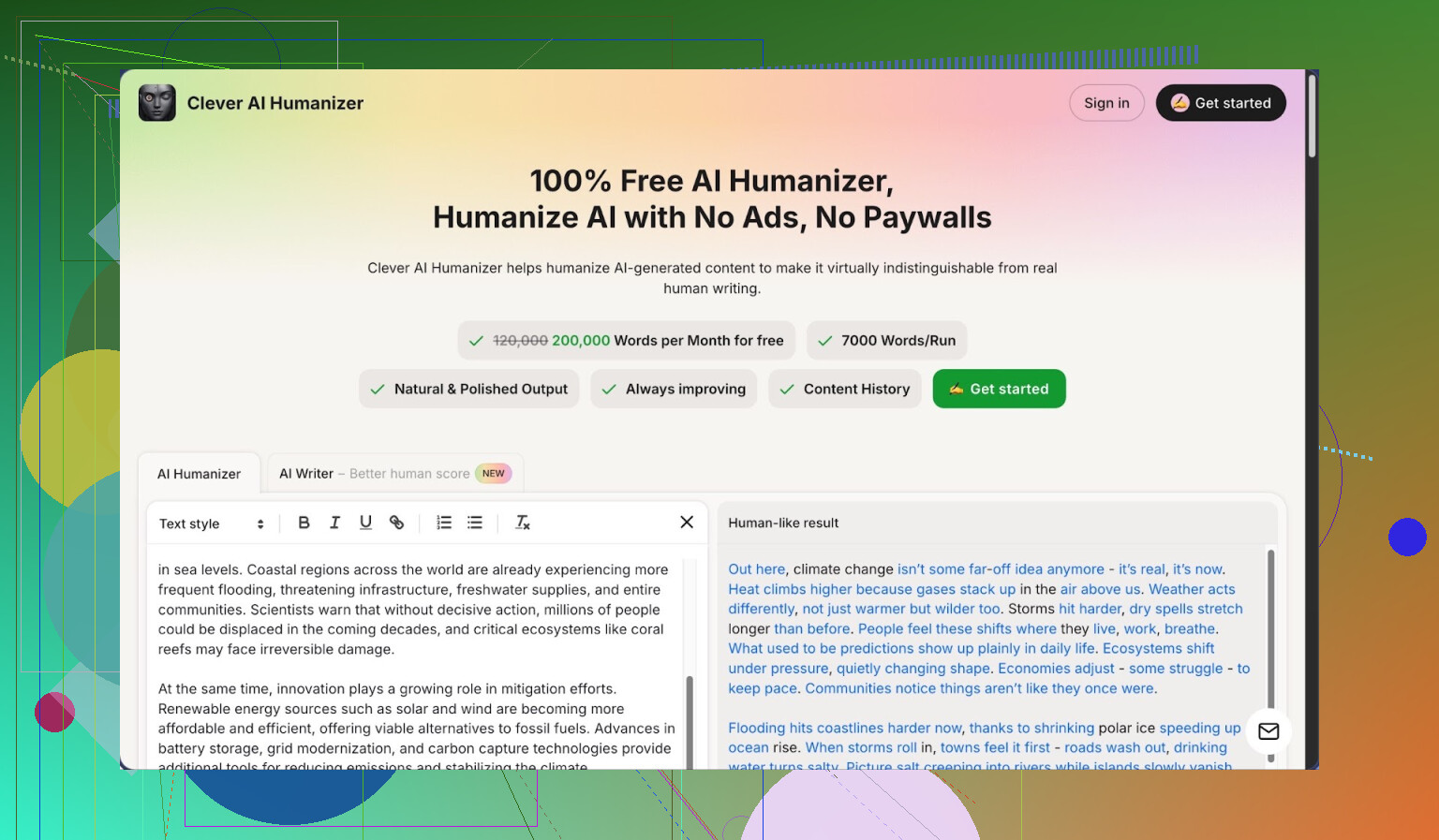

- Clever AI Humanizer

My default recommendation right now

Best for

Students, freelancers, and anyone who needs a lot of text “de-AI’d” without paying for every paragraph.

Rough scores from my runs

Detection: 7 out of 10

Writing quality: 8 out of 10

Site: https://cleverhumanizer.ai/

Why I keep going back to it

Most “free” tools gave me 150 to 300 words, then hit a wall or paywall. Clever AI Humanizer gives 200,000 words per month on the free tier, and it handled 7,000 words per run for me without choking.

No card, no watermark, no weird crippled model. Same engine for free users as far as I can tell. The devs behind it, Clever Files, have a habit of launching things free to get users, so this fits that pattern.

Modes I actually used

It has four modes, and they are not cosmetic. Output tone shifted in predictable ways.

• Casual

This one felt closest to how I write when I am not overthinking. Shorter sentences, fewer “AI” tics. On ZeroGPT, I was getting clean passes most of the time. On GPTZero, it did decently for non-technical content.

• Simple Academic

I pushed a few research style paragraphs through this. It kept the terminology but dialed down the “perfect” structure. That helped with detectors and made it easier to mix with my existing notes.

• Simple Formal

I used this for emails and short reports. Reads like a normal professional who is not trying to impress a committee. No fake “Dear Sir or Madam” energy.

• AI Writer

Different thing entirely. You feed it a prompt and it writes from scratch, avoiding the patterns detectors look for. I tested this with ZeroGPT and the scores stayed in the safe range for my samples. Needed light edits for voice, not for grammar.

The main point: I did not need to re-edit whole paragraphs to fix broken sentences or logic jumps. That alone puts it above half the tools below.

Pros I noticed

• 200,000 words per month free

• 7,000 word limit per run, biggest I ran into

• ZeroGPT gave me perfect or near perfect “human” on all my test runs

• Output reads like something you could send to a teacher or client without being embarrassed

• Keeps history of your past runs, useful when you forget what version you used

• No card needed to use it properly

• They push updates often, quality over time went up, not down

• Interface is simple, no 20 sliders and 10 checkboxes

Cons

• GPTZero still tripped on some pieces, especially long, technical ones

• No paid “pro” plan, so if you somehow need more than 200k words a month from one account, you hit the ceiling

Pricing

Free, at the time I tested.

Extra reading if you want receipts

Reddit review: https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

Big Reddit thread about Humanize AI tools generally:

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Other AI humanizers I tried

Quick notes, no marketing, just how they behaved for me.

Undetectable AI

Review:

https://cleverhumanizer.ai/community/t/undetectable-ai-humanizer-review-with-ai-detection-proof/28/

The tool seems obsessed with detectors and forgets the text is meant for people.

Detection: around 7

Writing quality: around 5

What went wrong for me

• It rewrote my samples so hard the grammar snapped in places

• Logical flow broke, sentences repeated ideas or contradicted each other

• I spent more time repairing the “humanized” version than refining the original AI text

• Tons of switches and sliders, but not enough restraint in how it rewrites

• Refund terms felt tight, and the data wording in their policy was vague

Grubby AI

Looked like it had been tuned too heavily on specific detectors.

Detection: ~6

Writing: ~6.5

What I saw

• Detector-specific modes lock you into chasing one tester at a time

• Small changes in input caused big swings in output reliability

• Built in checker made it look safer than it was, external tools disagreed

• Free tier barely usable, limits too low to run honest tests without paying

HIX Bypass

Review:

https://cleverhumanizer.ai/community/t/hix-bypass-review-with-ai-detection-proof/37/

Single trick behavior.

Result pattern

• ZeroGPT passed my text

• GPTZero flagged it, every time, on the same content

Output issues

• The writing stayed flat and mechanical

• AI style punctuation survived, like perfect parallel commas and robotic rhythm

• I had to hand edit quite a lot to get it to read like me

Walter Writes AI

Review:

https://cleverhumanizer.ai/community/t/walter-writes-ai-review-with-ai-detection-proof/26/

This one writes clean sentences but detection scores bounced.

Scores from my runs

• Writing: close to 8

• Detection: around 5, with random variance

Details

• It reads like normal English, which is good

• But bypass reliability was weak, same input sometimes looked much more “AI” to detectors

• Free tier ran out fast

• Paid plans still limit how many runs you get, so you think about every click

StealthWriter AI

Review:

https://cleverhumanizer.ai/community/t/stealthwriter-ai-review-with-ai-detection-proof/23/

Feels like it focuses on not changing the length of your text.

My numbers

• Detection: about 4

• Writing: around 6.5

What felt off

• Word count stayed close to the source, which looks good at first

• GPTZero flagged almost everything anyway

• Built in detector painted a nicer picture than external tests

• Pricing felt high for the performance

• No refunds, which is rough once you see real detector results

BypassGPT

Review:

https://cleverhumanizer.ai/community/t/bypassgpt-review-with-ai-detection-proof/39/

Cheap way to pass ZeroGPT, not much else.

Pattern I got

• ZeroGPT liked it

• GPTZero rejected most of the same runs

Problems

• Grammar slips showed up early in longer content

• AI-ish punctuation stayed put

• Free tier was tiny, mostly for a taste rather than real usage

NoteGPT

Review:

https://cleverhumanizer.ai/community/t/notegpt-ai-humanizer-review-with-ai-detection-proof/35/

Note platform first, humanizer bolted on.

Scores

• Writing: close to 8

• Detection: around 2

My experience

• Outputs read fine to a person

• Both GPTZero and ZeroGPT consistently marked the results as AI

• Tuning controls changed style a bit, but did nothing for bypass rates

TwainGPT

Review:

https://cleverhumanizer.ai/community/t/twaingpt-humanizer-review-with-ai-detection-proof/36/

Feels like a ZeroGPT-specific helper.

Detector behavior

• ZeroGPT passed my samples

• GPTZero flagged them as AI

Text quality

• Sentences were choppy, with repeated phrasing

• I spent extra time smoothing transitions and trimming repetition

• Fine for short snippets, annoying for long form writing

Phrasly

Good “editor”, poor “humanizer”.

Scores I saw

• Writing: around 7

• Detection: near zero

Observations

• It polishes your writing, like a paraphraser

• Detectors still flagged everything as AI, regardless of settings

• Free tier disappeared almost instantly, limits were harsh

Decopy AI Humanizer

This one advertises as free, but the outputs I got were rough.

Detector behavior

• GPTZero called every test 100 percent AI

• ZeroGPT scores went from “kind of okay” to “terrible” with no pattern

Writing feel

• Grammar was not broken, but the language came out childish and oversimplified

• You would have to rewrite most of it by hand to use it in anything serious

Originality AI Humanizer

I tried this, then stopped bothering.

What happened

• GPTZero and ZeroGPT both flagged every single output as AI

• Changes were tiny, more like a light paraphrase

• Obvious AI punctuation and em dashes stayed untouched

End result: you feed AI text in and get AI text out with small cosmetic edits.

Full review:

Good looking site, weak reliability.

Detector results

• GPTZero flagged all of my test pieces as 100 percent AI

• ZeroGPT was inconsistent, one pass looked decent, the next was max AI on the same style

Other issues

• Grammar slipped in unexpected places

• Readability dropped often, like it was forcing token changes

• Privacy policy language felt vague and not reassuring

Review:

My notes here are blunt.

Experience

• Outputs felt awkward, with obvious errors and clunky phrases

• Detector results jumped all over, no stable pattern

• Overall performance looked amateur, especially next to tools like Clever AI Humanizer

UnAIMyText

On paper it sounded promising. In practice it was hard to use seriously.

Detector behavior

• GPTZero flagged every single “humanized” piece as 100 percent AI

Output quality

• All three modes I tried produced broken phrases, weird wording, and grammar problems

• I would not send that text to an editor unless I planned to pay them overtime

If you want one takeaway

For now, if your goal is a mix of:

• decent detector performance on mainstream tools,

• readable output that does not need major surgery,

• and a free tier that is not bait,

Clever AI Humanizer at https://cleverhumanizer.ai/ is the only one from this batch I kept in my own workflow. The rest are either niche, unstable across detectors, or produce text that needs more cleanup than the base AI output.
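For anyone who wants the rough scores above in one place, here is a minimal sketch that ranks the tools I gave both numbers for. The 50/50 weighting and the names `SCORES`, `combined`, and `rank` are assumptions for illustration, not part of my test setup. "Around" and "close to" values are taken at face value, and Phrasly's "near zero" is entered as 0.

```python
# Rough scores quoted in this post: (detection, writing quality), each out of 10.
# Approximate values ("around", "close to", "~") are entered at face value.
SCORES = {
    "Clever AI Humanizer": (7, 8),
    "Undetectable AI": (7, 5),
    "Grubby AI": (6, 6.5),
    "Walter Writes AI": (5, 8),
    "StealthWriter AI": (4, 6.5),
    "NoteGPT": (2, 8),
    "Phrasly": (0, 7),  # "near zero" detection, entered as 0
}


def combined(detection, writing, detection_weight=0.5):
    """Weighted average of the two scores (the 50/50 default is an assumption)."""
    return detection_weight * detection + (1 - detection_weight) * writing


def rank(scores, detection_weight=0.5):
    """Return (tool, combined score) pairs, best first."""
    return sorted(
        ((name, combined(d, w, detection_weight)) for name, (d, w) in scores.items()),
        key=lambda item: item[1],
        reverse=True,
    )


if __name__ == "__main__":
    for name, score in rank(SCORES):
        print(f"{name:22} {score:.2f}")
```

Raising `detection_weight` favors tools that pass detectors even when the text needs repair. Lowering it favors readable output.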

You are running into two separate problems and most tools only try to solve one.

- Detector patterns.

- Human patterns.

A lot of what @mikeappsreviewer shared matches what I see, but I disagree a bit on relying so much on ZeroGPT and GPTZero scores as the main goal. Manual review is your real bottleneck. Detectors change. Humans stay picky.

Here is what has worked well for me in 2026.

- Use a solid “base” humanizer, not ten of them in a chain

For that role, Clever Ai Humanizer is also my top pick right now.

Reasons that matter in practice, not marketing:

• It handles long inputs without chopping logic. I pushed long blog posts and essays and the structure stayed intact.

• The Casual and Simple Formal modes sound close to how a normal person writes when they are not trying to sound like a thesis.

• It does enough change to disturb detector patterns, but not so much that grammar falls apart.

I would avoid stacking multiple humanizers. Every extra rewrite tends to:

• inflate weird synonyms

• break pronoun references

• introduce tense drift

If you must rerun, run it once through Clever Ai Humanizer, then do manual edits yourself.

- Stop feeding it “perfect” AI text

If your base AI text is hyper structured, with flawless topic sentences and perfect transitions, every humanizer struggles. Before you paste it in, do this quick “roughening” step yourself:

• Shorten some sentences manually.

• Add one or two side comments.

• Change some generic words to your own go-to phrases.

• Remove some rigid headings.

That alone drops detector scores a bit and makes the humanizer’s job easier.

- Mix in your own fingerprints

Detectors and human reviewers both look for consistency. You want your usual quirks in there. For example, I always:

• Use the same few filler phrases I use in real emails.

• Keep 1 or 2 mild typos or double spaces in long docs.

• Change at least one example to something from my real life or niche.

Run text through Clever Ai Humanizer, then spend 3 to 5 minutes “personalizing” like this. That fixes most of the robotic feel.

- Stop chasing a 0 percent AI score

This is where I part ways a bit from how tool reviews are often framed, even good ones like the one from @mikeappsreviewer. If you aim for “100 percent human” on every detector, you end up with:

• broken grammar

• odd word order

• unnatural synonym spam

Aim for “low to moderate AI” plus strong readability. Many manual reviewers accept some AI assistance as long as it sounds like one consistent person and the content is accurate.

- Test on a human, not only detectors

Quick check that helps more than another tool:

• Read your “humanized” piece out loud. If you trip a lot, it still sounds like a machine.

• Send one sample to a friend or coworker and ask, “Does this sound like me, or like a bot cleaned this up?”

If they say “Weirdly generic”, take that feedback back into your base prompt and your editing habits, not into another humanizer.

Concrete setup that works well:

• Generate initial content in your AI of choice with a loose, conversational instruction.

• Lightly roughen it yourself.

• Run through Clever Ai Humanizer in Casual or Simple Formal mode.

• Do a fast pass to insert your usual phrasing, 1 or 2 small typos, and one real example.

• Spot check with one detector if you want, but judge by your own read first.

You will get better results from that single tool plus your edits than hopping across five “bypass” apps that all promise magic.

Short version: there isn’t a magic “press button, pass all detectors forever” tool in 2026, but there is a sane combo of tool + workflow that’s good enough for most manual checks and the usual GPTZero / ZeroGPT stuff.

I’ll skip repeating the step‑by‑step that @mikeappsreviewer and @nachtdromer already walked through, since they basically lab-tested half the market. I agree with both of them on one core thing: if you’re going to pick a single humanizer as your base, Clever Ai Humanizer is the one that actually earns that slot right now.

Where I slightly disagree with them:

- I wouldn’t obsess over detector scores as much as @mikeappsreviewer did. Those tools get updated, your text doesn’t. Chasing “0% AI” on every run usually wrecks readability.

- I also think @nachtdromer underplays one thing: some use cases still care a lot about consistent detector passes first, quality second. If you’re in that camp, you probably will test with GPTZero / ZeroGPT whether people like it or not.

Here’s what’s realistically working for me in 2026:

- Use Clever Ai Humanizer as the last step, not the first

I’ve had better luck writing my base draft with ChatGPT or Gemini in a more casual prompt, doing a quick 2–3 minute manual cleanup, then running through Clever Ai Humanizer in Casual or Simple Formal.

Whenever I tried chaining tools (like “AI → paraphraser → humanizer → another bypass”), the text started sounding like it had been translated through 4 languages. Detectors sometimes liked it more, but humans didn’t.

- Keep the humanizer runs short and focused

This is one spot where I diverge from the “just throw 7k words in one go” mentality.

Long multi‑section docs in one pass tend to come out a bit samey in tone. I’ve had better results doing:

- Section by section (like 800–1500 words) through Clever Ai Humanizer

- Then I manually vary intros and transitions between sections

That keeps it from having that weird uniform AI flavor across 10 pages.
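A minimal sketch of the section-by-section split, assuming your draft separates paragraphs with blank lines. The function name `split_into_sections` and the 1500-word default are illustrative picks, not anything the tool requires. It only enforces the upper bound, so a short final chunk can fall below 800 words.

```python
def split_into_sections(text, max_words=1500):
    """Group paragraphs into chunks of at most max_words words.

    Paragraphs are never split, so a single paragraph longer than
    max_words ends up as its own oversized chunk.
    """
    chunks, current, count = [], [], 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue  # skip empty paragraphs left by extra blank lines
        words = len(para.split())
        # Start a new chunk when adding this paragraph would exceed the cap.
        if current and count + words > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += words
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on paragraph boundaries keeps each chunk readable on its own, which makes the manual pass on intros and transitions easier afterwards.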

- Let some AI-ness survive

People go too extreme. If you take “must sound human” to the limit, you introduce fake mistakes and awkward synonyms that no real person uses that often.

What has worked better for me:

- Accept that detectors might say “mixed” or “some AI”

- Make sure the text reads like one actual person, with consistent phrasing and habits

Manual reviewers tend to care more about plagiarism and nonsense than whether a detector says 18% AI.

- Use Clever Ai Humanizer differently for different content types

Less talked about, but it matters:

- Essays / school stuff: Simple Academic, then you sprinkle in your own typical phrases, and change examples to your real life. Detectors often hate when examples are super generic and polished.

- Client emails / reports: Simple Formal, and then intentionally shorten a few sentences and add one slightly imperfect sentence. Nobody writes corporate emails in textbook English every time.

- Blog posts / casual content: Casual mode, but I usually cut 5–10 percent of the “filler clarity” sentences that AI loves. Human writers are lazier with signposting every single point.

- Don’t use humanizers to fix bad prompts

This is the trap I see a lot:

- People let ChatGPT produce ultra‑stiff textbook paragraphs

- Then expect a humanizer to magically turn it into something that sounds like a sleep deprived college student or a busy freelancer

If your base text is robotic and overstructured, every tool will struggle. Prompt earlier for:

- Shorter paragraphs

- Slightly messy structure

- A more personal angle

Then let Clever Ai Humanizer handle the pattern-breaking instead of a total personality transplant.

- When not to bother with a humanizer at all

I’ll be blunt: for highly technical docs, research summaries, or code explainers, I often skip humanizers completely and just do:

- AI draft

- Heavy manual edit for voice

- Maybe a light paraphrase in small spots that feel too “model-ish”

Those kinds of docs are naturally dry, and humanizers sometimes overcompensate and make them look dumber or wrong. Detectors also tend to be more suspicious of deeply technical stuff no matter what.

If you want something concrete instead of theory:

- Stick with Clever Ai Humanizer as your one “real” humanizer.

- Run sections, not whole books, through it.

- Accept “low to moderate AI” on detectors rather than nuking the writing quality.

- Spend 3–5 minutes after each run making it sound like you, not like “generic native English copywriter.”

That combo has gotten me through manual reviews and basic detector checks way more reliably than bouncing around ten different “bypass” brands that brag about 0% AI and then spit out text that reads like a drunk thesaurus.

Skipping what @nachtdromer, @espritlibre and @mikeappsreviewer already covered about testing setups, here’s the angle I haven’t seen spelled out yet: pick one humanizer as your “engine,” then design your workflow and risk level around that, instead of tool‑hopping.

1. Is there a “best” humanizer in 2026?

In practice, for most people, yes: Clever Ai Humanizer is the most balanced option right now. The others that keep getting mentioned are either:

- tuned too hard for specific detectors and mangle logic

- or polish the wording but barely move detector scores.

I don’t totally agree with the “just accept whatever detector says” stance, but I also disagree with chasing perfect 0 percent on every single check like @mikeappsreviewer did in their spreadsheet marathon. You want “low suspicion plus sane writing,” not a statistical miracle.

2. Pros of Clever Ai Humanizer (beyond what’s already been said)

Some points that matter in real use, not just in tests:

Pros

- Stable style across long projects

If you are working on a series of essays or articles, it tends to keep the same general voice. That matters more for manual reviewers than nudging a detector from 14 percent to 2 percent.

- Good at preserving structure

Where a lot of tools reorder sentences or chop paragraphs, Clever Ai Humanizer usually keeps your outline and just alters phrasing and rhythm. For assignments and client work that follow a required template, this is huge.

- Low “synthetic metaphor” rate

Many bypass tools inject weird analogies or forced “creative” language to look human. Clever Ai Humanizer mostly avoids that, which keeps you out of the uncanny valley where the text sounds like BuzzFeed pretending to be a research paper.

- Modes that are actually distinct

Casual vs Simple Formal vs Simple Academic are meaningfully different, so you can tune tone to your context without manually rewriting every paragraph.

3. Real cons you should factor in

People gloss over the downsides, so here is the blunt list:

Cons

- Not great for highly technical or niche jargon

It sometimes softens terms or slightly rephrases domain‑specific language in a way that feels “off” to experts. For scientific or legal writing, I would only use it on intros, transitions and explanations, not on the dense core.

- Detector performance is uneven across content types

Narrative or general essays tend to come out fine. Dense bullet‑heavy or formulaic stuff trips detectors more. So if you write a lot of checklists or step‑by‑step guides, you will still need manual tweaks.

- Tone can drift toward neutral corporate

Even in Casual mode, after many pages, your text starts to sound like a competent but generic content writer. If you have a very strong personal voice, you will want to do a final pass to re‑inject your quirks.

- No advanced control for “aggressiveness”

Unlike some competitors that offer sliders for “change level,” with Clever Ai Humanizer you do not get fine‑grained control. For some people that simplicity is a plus; for power users it can be limiting.

4. How I’d actually use it differently than others here

Where I disagree slightly with the others:

- I would occasionally run long chunks (3k–5k words) in one pass, but only after I have already broken the repetitive AI patterns myself. This keeps cohesion. Then I tweak intros and conclusions of each section manually to avoid the “same opening line every time” issue.

- I do not rely on it as the absolute last step. My best results:

AI draft → quick personal edit → Clever Ai Humanizer → short final human pass focused only on voice and small imperfections.

If you want to stop fighting tools and just get something workable:

- Use Clever Ai Humanizer as your main mover.

- Reserve other tools for niche cases, not as a chain.

- Accept “low AI probability + clean prose” instead of burning hours chasing 0 percent.

That mix has been more reliable for actual reviewers than any single perfect detector score.